One of the most important developments in the Revolution in Military Affairs (RMA) is the integration of artificial intelligence (AI) into defense technology and network systems, which has significantly increased the operational efficiency of modern weapons platforms.

However, AI also brings inherent vulnerabilities. Among the most concerning are deepfakes: digitally fabricated content that can compromise national security.

Deepfakes have emerged as a growing concern across the security sector, and nowhere more so than in the air and missile defense space, especially given India’s complex threat environment.

What is a deepfake?

Deepfakes are digitally manipulated content (video, audio, or images) designed to spread misinformation. State and non-state actors alike can misuse deepfake technology to fabricate malicious scenarios and statements.

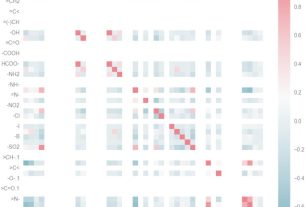

These capabilities are enabled by machine learning, and in particular deep learning, which allows attackers to fabricate or alter depictions of events in ways that serve their strategic objectives.

How is deep learning used in missile and missile defense networks?

Deep learning has fundamentally changed missiles and missile defense networks, turning them into adaptive, intelligent systems that can operate across diverse threat environments. These technologies convert real-time situational data into decisive operational outputs.

A key challenge in any missile engagement is accurately predicting flight trajectories, which external variables can alter significantly. Deep learning addresses this problem by enabling more accurate trajectory analysis of adversary missiles, thereby reducing the risk of fatal miscalculations.

Neural networks further improve performance by allowing missile systems to distinguish genuine targets from countermeasures, reducing the margin for error and compressing engagement timelines.

Deepfakes, missiles, and missile defense networks

Deepfakes rely on the same deep learning techniques that power missile defense systems, yet they can have a destabilizing effect on those very systems. When fabricated content enters missile and missile defense decision-making, it can lead to dangerous confusion and miscalculation.

Even a limited conventional first strike could escalate into full-scale conflict if decision makers act on false threat assessments shaped by deepfake content.

Fabricated audio and video showing leaders of nuclear-weapon states appearing to authorize the use of nuclear weapons can be produced and disseminated with relative ease, even when no such decision has actually been made.

During combat operations, deepfakes could be used to depict the destruction of critical assets that in fact remain intact. A state could use fabricated evidence of the destruction of its own assets to justify offensive action. Conversely, adversaries could disseminate such images to undermine public morale and political will.

Information warfare is a central pillar of hybrid conflict and is becoming increasingly prominent in contemporary security affairs. Deepfakes simulating missile attacks are frequently used to spread disinformation and further destabilize the security environment.

As the 2026 Iran-Israel-US campaign shows, such content can quickly become commonplace, and it has also become a source of income for opportunistic content creators.

States could also misuse deepfake technology during missile tests to conceal their true capabilities from adversaries. In a crisis, fabricated images of attacks could trigger false alarms, overwhelming air and missile defense networks with responses to non-existent threats.

This burden would be especially acute given the already severe challenge of intercepting drones and missiles simultaneously. Beyond simulated attacks, deepfake footage depicting the removal of a senior missile force commander could cause significant strategic and psychological disruption.

In active conflict environments, fabricated footage of missile attacks can trigger panic-driven civilian behavior such as hoarding essential supplies. The resulting shortages of food, medicine, and basic hygiene products can further add to the humanitarian toll of the conflict.

Social media remains the primary vehicle for the rapid spread of deepfake content related to missile attacks and interceptions. Such material spreads quickly and is often accepted as genuine.

As AI capabilities become more sophisticated, distinguishing fabricated content from real footage will become increasingly difficult for the general public, and sometimes even for policymakers.

Conclusion

In an era of accelerating technological change, military planners and policymakers face increasing challenges from adversarial applications of AI-driven tools.

Deepfakes are one such challenge, and they are not limited to the military field. Ordinary individuals can create and share this content through social media, amplifying instability far beyond what a single state actor could achieve on its own.

Addressing this challenge requires a coordinated effort across technical, doctrinal, and policy dimensions.

Devalina Ghoshal is the author of the book ‘The Role of Ballistic and Cruise Missiles in International Security’.

She is also a regular commentator on missile issues.