The global video economy has reached a tipping point. Now, with streaming platforms offering 4K as a baseline and 8K broadcasts entering commercial trials, viewers’ visual fidelity expectations have never been higher. But beneath the surface of polished content libraries lies a stubborn operational reality. The majority of existing video assets, including legacy film, user-generated content, security footage, and brand archives, remain locked in suboptimal resolutions and suffer from compression artifacts, noise, and unstable frame rates.

Traditional repair and upscaling workflows have long been the only solution, but they come with steep costs. One hour of broadcast-ready upscaling can take days of skilled labor and requires experts to navigate complex parameter sets in applications like DaVinci Resolve and Adobe After Effects. Hardware demands are equally formidable. Render farms and dedicated workstations require capital that many independent creators and mid-sized studios can’t justify. As a result, there is a persistent gap between the amount of content that needs to be enhanced and the human and computational resources available to process it.

Into this gap, AI video enhancers have moved from experimental utility to production infrastructure. These systems apply deep learning models, particularly convolutional neural networks and transformer-based architectures, to temporal and spatial reconstruction, automating tasks that once required laborious manual supervision. The result is not just faster processing, but a fundamental reconfiguration of what post-production teams can promise in terms of quality and speed.

Core features of the latest AI video enhancers

A rigorous evaluation of an AI video enhancement tool starts with its architecture. Today’s platforms typically offer four integrated capabilities that, when combined, replace separate stages in a traditional pipeline.

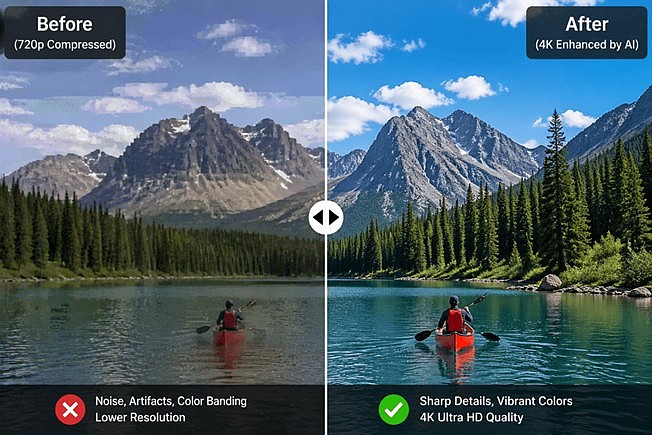

- First, super-resolution reconstruction allows standard-definition or high-definition sources to be scaled to 4K or 8K without the blurring inherent to bicubic interpolation. Instead of stretching existing pixels, generative models infer high-frequency details, having been trained on large datasets of paired low-resolution and high-resolution frames. As a result, the edge integrity and texture fidelity that traditional algorithms ignore are maintained.

- Second, intelligent denoising and artifact suppression address the blockiness and mosquito noise typical of highly compressed footage. Rather than applying a uniform blur filter, the AI-driven system analyzes motion vectors and scene semantics to distinguish noise from legitimate detail, smoothing out flat areas while preserving grain structure where appropriate.

- Third, optical flow and deep learning frame interpolation improve temporal resolution, converting 24 fps or 30 fps material to 60 fps or 120 fps. This is especially important for sports archives, gaming content, and slow-motion reconstructions where motion smoothness has a direct impact on viewer retention.

- Finally, modern systems emphasize pipeline automation. Batch processing, API access, and low-code integration allow you to ingest, process, and deliver entire libraries without having to manually adjust each clip. For organizations managing thousands of assets, moving from craft-based iteration to automated throughput represents the biggest efficiency gain.
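The frame interpolation step in the list above can be made concrete with a small sketch. It uses naive linear blending between neighboring frames, which is only the baseline that optical-flow and learned interpolators improve on, not what a production enhancer does. The function names `interpolation_plan` and `blend` are hypothetical, and frames are modeled as flat lists of pixel intensities purely for illustration.

```python
from fractions import Fraction


def interpolation_plan(src_fps, dst_fps, src_frame_count):
    """For each output frame, return (left_index, right_index, weight_of_right).

    Output frame n sits at source position n * src_fps / dst_fps.
    Exact fractions avoid floating-point drift over long clips.
    """
    step = Fraction(src_fps, dst_fps)  # source frames advanced per output frame
    last = src_frame_count - 1
    plan = []
    n = 0
    while n * step <= last:
        pos = n * step
        left = pos.numerator // pos.denominator  # floor of the source position
        right = min(left + 1, last)              # clamp at the final frame
        plan.append((left, right, pos - left))
        n += 1
    return plan


def blend(frame_a, frame_b, weight):
    """Linearly blend two frames (assumption: flat lists of intensities)."""
    return [round((1 - weight) * a + weight * b) for a, b in zip(frame_a, frame_b)]


# Converting 24 fps to 60 fps: each output frame advances 2/5 of a source frame.
plan = interpolation_plan(24, 60, 3)
middle = blend([0, 100], [100, 200], plan[1][2])
```

Linear blending produces ghosting on fast motion, which is why the systems described here estimate motion rather than simply averaging frames. The timing plan, however, is the same regardless of how the in-between frame is synthesized.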

Cross-industry applications: Measurable value beyond editorial scope

The practical impact of AI video enhancers is most apparent when considered in specific areas. This technology is driving quantitative changes in cost, speed, and output quality across film preservation, digital commerce, and public safety.

Film archive restoration: How organizations use AI to improve video quality to 4K

Cultural institutions and distributors face an urgent preservation crisis. Analog film masters and early digital telecine transfers are often stored in 480i or 720p formats, and the underlying media deteriorate with each passing decade. Traditional photochemical restoration involves scanning, manual stain removal, color timing, and optical printing, which can take several weeks per feature.

Introducing an AI video enhancer into this workflow can change the economics dramatically. After the initial scan, a machine learning model handles most of the upscaling, scratch reduction, and stabilization to produce a 4K master that retains the organic texture of the original celluloid. A human colorist then steps in to do creative grading rather than remediation. National film archives in several European markets have reported that restoration schedules for some catalog titles have been compressed by 60-70%, freeing budget to cover a larger portion of their collections.

E-commerce and branded content at scale: The role of AI quality improvers

Global brands now operate content matrices that span dozens of markets and languages. User-generated reviews, influencer collaborations, and rapid-response social campaigns generate thousands of clips each month. These clips are often shot on mobile devices under unstable lighting and degraded by codec compression. It is operationally impossible to bring this volume up to broadcast-safe standards by hand.

An AI quality improvement tool acts as a normalization layer here. By ingesting mixed-resolution sources and applying consistent enhancement profiles (sharpening, stabilization, dynamic range adjustment), marketing teams can enforce visual brand standards without increasing headcount. Early adopters in the consumer electronics space have recorded approximately 40% reductions in post-production costs while reducing time-to-platform for regional campaigns from days to hours.
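As a rough illustration of what a normalization layer might look like in practice, the sketch below applies one enhancement profile to clips of different resolutions. Everything here is hypothetical: `EnhancementProfile`, `plan_clip`, the field names, and the rules (never downscale, cap the upscale factor, keep dimensions even) are assumptions made for the example, not the behavior of any particular product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EnhancementProfile:
    """Hypothetical brand-wide settings applied to every incoming clip."""
    target_height: int       # desired output height in pixels
    sharpen: float           # sharpening strength, 0.0 to 1.0
    stabilize: bool          # whether to run stabilization
    max_scale: float = 4.0   # assumption: refuse to upscale beyond this factor


def plan_clip(width, height, profile):
    """Derive per-clip settings from the shared profile."""
    # Assumption: sources already above the target are left at native size.
    scale = min(max(profile.target_height / height, 1.0), profile.max_scale)
    return {
        "scale": scale,
        # Round to even dimensions, which most video codecs expect.
        "width": round(width * scale / 2) * 2,
        "height": round(height * scale / 2) * 2,
        "sharpen": profile.sharpen,
        "stabilize": profile.stabilize,
    }


social = EnhancementProfile(target_height=1080, sharpen=0.3, stabilize=True)
jobs = [plan_clip(w, h, social) for w, h in [(1280, 720), (3840, 2160), (320, 240)]]
```

The point of the design is that the profile is defined once and the per-clip differences are computed, so a mixed batch of phone footage and studio masters leaves the pipeline with the same look without anyone tuning individual clips.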

Surveillance and industrial vision: Detailed recovery under constraints

Beyond the creative industries, public safety and manufacturing operations rely on video evidence and visual inspection. Low-light surveillance, traditional CCTV hardware, and bandwidth-limited remote feeds routinely produce insufficient footage for license plate recognition or defect analysis.

In this setting, AI video enhancer technology focuses on recovering detail rather than cosmetic polish. Enhancement models trained specifically on surveillance datasets can reconstruct readable text, facial shapes, and surface anomalies from sources previously considered unusable. For industrial quality control teams, this means extending the life of existing camera networks rather than undertaking expensive hardware overhauls, providing a practical bridge between legacy infrastructure and modern diagnostic requirements.

Technology trajectory: solving structural production problems

The maturation of AI video enhancer technology has solved three structural constraints that have shaped the post-production industry for decades.

- The first is the democratization of hardware. Cloud-based inference and optimized local silicon, especially GPU and NPU architectures, have lowered the capital barrier. Processes that once required dedicated render farms can now be run on high-end workstations or pay-as-you-go cloud instances, giving independent filmmakers and regional institutions access to professional-grade enhancement.

- The second is a shift from post-correction to real-time enhancement. New pipelines integrate enhancement modules into live production switchers and cloud-native editing platforms. In live streaming and remote collaboration scenarios, this means viewers can receive an upscaled, stable feed without the latency penalties that once made real-time processing impractical.

- Third, and perhaps most important, is the easing of the expertise shortage. Traditional color science and restoration techniques take years to master. While creative judgment remains invaluable, the mechanical burden of pixel-level reconstruction is increasingly borne by algorithms. AI video enhancers allow senior specialists to focus their attention on editorial decisions and client strategy rather than repetitive technical labor.
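The real-time point in the list above comes down to a per-frame time budget: at a given frame rate, enhancement plus any other per-frame work has to finish before the next frame arrives. The sketch below shows that arithmetic. The function names and the example timings are hypothetical and are not measurements of any real system.

```python
def frame_budget_ms(fps):
    """Time available to process one frame, in milliseconds."""
    return 1000.0 / fps


def fits_real_time(inference_ms, fps, overhead_ms=0.0):
    """True if model inference plus other per-frame overhead fits the budget.

    overhead_ms is an assumed lump sum for decode, encode, and transfer.
    """
    return inference_ms + overhead_ms <= frame_budget_ms(fps)


# At 60 fps the budget is roughly 16.7 ms per frame.
budget_60 = frame_budget_ms(60)
ok = fits_real_time(inference_ms=12.0, fps=60, overhead_ms=3.0)
too_slow = fits_real_time(inference_ms=12.0, fps=60, overhead_ms=5.0)
```

A model that misses the budget at 60 fps may still be usable at 30 fps, where the budget doubles, which is one reason frame rate and enhancement quality are often traded against each other in live workflows.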

Deeper signals of productivity redistribution

The proliferation of AI video enhancers suggests something more than incremental improvements in software. This marks a reallocation of production capacity from well-capitalized studios to decentralized creators and lean organizations.

The competitive landscape changes when quality is no longer limited by access to expensive equipment or rare technical expertise. Independent documentary makers can perform archive-level restorations. E-commerce teams in emerging markets can match the visual sophistication of their multinational competitors. Security departments can extract actionable intelligence from legacy hardware without waiting for municipal budget cycles.

Importantly, this democratization does not devalue human contributions. On the contrary, automating the deterministic aspects of enhancement, such as noise profiles, scaling kernels, and frame blending, increases the importance of creative direction, narrative judgment, and strategic delivery. Technology sets a higher baseline, but the work of differentiation continues to be done by humans.

Final thoughts

The AI video enhancer is no longer an experimental plugin. It is becoming foundational infrastructure for organizations with video as a core asset. Its value proposition is clear: compress timelines, standardize quality at scale, and extract utility from previously degraded sources.

For teams evaluating adoption, the deciding factors should be model transparency, hardware compatibility, output codec support, and licensing clarity (especially for commercial redistribution). The market is rapidly moving away from general-purpose models toward domain-specific models optimized for film color science, surveillance, or e-commerce.

Going forward, the most productive workflows will not be those that seek to replace human editors with automation, but those that position AI video enhancers as first-pass engines. It is humans who set creative intentions. The machine processes the mathematics of pixels. In that division of labor, efficiency and quality eventually cease to be opposing forces.