A recent federal class action lawsuit filed in Illinois alleges that an AI healthcare company obtained health data through an acquisition and shared that data with biotech and pharmaceutical companies in violation of key privacy laws. MedTech companies and their business customers should take note. The April 15 lawsuit targets Tempus AI, a Chicago-based healthcare technology company, and stems from its February 2025 acquisition of a genetic testing company with a database of more than 1 million genetic tests. The plaintiffs allege that Tempus AI forced the acquired company to turn over its entire cache of genetic testing data shortly after the acquisition, and then licensed that data to more than 70 pharmaceutical and biotech partners through agreements totaling more than $1.1 billion, all without notifying patients or obtaining their written consent. What do employers and medical technology companies need to know about this new line of attack, which we’re likely to see more of in the near future?

What the lawsuit claims

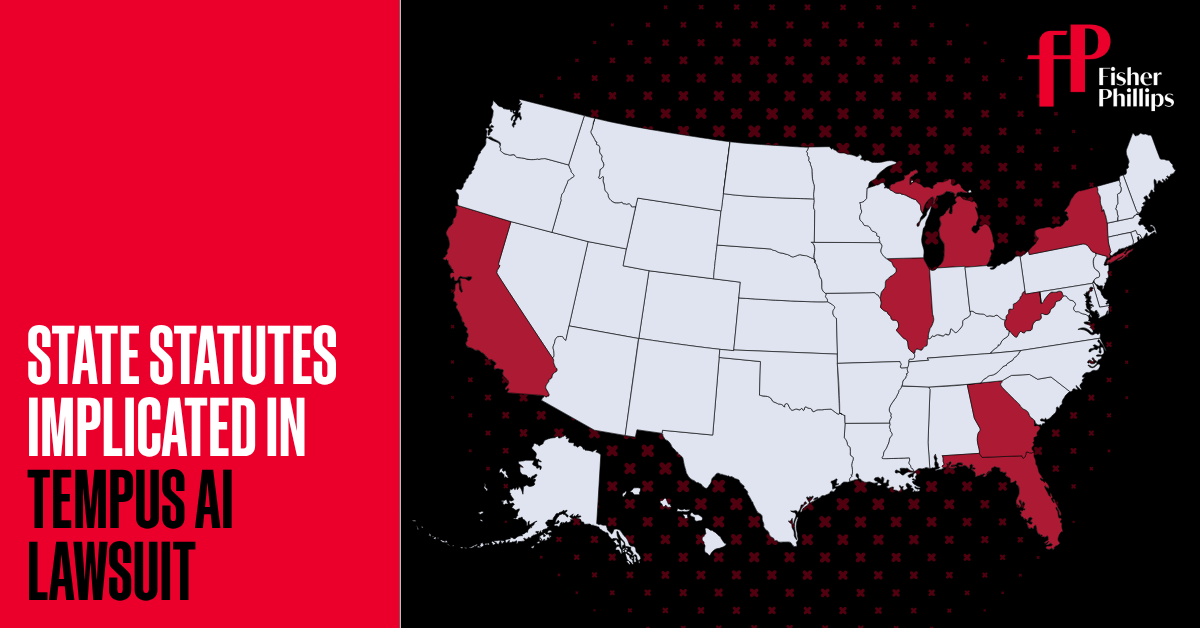

The seven named plaintiffs, who come from states across the country (Illinois, California, New York, Michigan, Florida, Georgia, and West Virginia), allege that they provided genetic information to Ambry Genetics Corporation for medical testing purposes with the expectation that it would be treated as confidential. When Tempus AI completed its $600 million acquisition of Ambry, the complaint alleges, their data was swept up and transferred without notice or consent, then commercially exploited both as training data for Tempus AI’s artificial intelligence tools and as a licensed data set for third-party life sciences companies.

The complaint alleges that Tempus AI’s revenue grew by more than 83% in 2025 compared to the previous year, with much of that increase coming from the commercialization of Ambry’s genetic data. Plaintiffs further claim that Tempus AI’s public statements that it shares only “anonymized” data are unsubstantiated. They argue that genetic data is inherently identifiable and cannot be meaningfully anonymized, no matter which identifiers are removed.

The legal claims span a wide range of state laws, including Illinois’ Genetic Information Privacy Act (GIPA), California’s Confidentiality of Medical Information Act (CMIA), and consumer protection laws in Florida, Georgia, Michigan, New York, and West Virginia. The complaint also includes common law claims of negligence, unjust enrichment, fraudulent concealment, conversion, invasion of privacy, breach of contract, and breach of fiduciary duty.

The genetic data angle

What makes this case particularly significant is the nature of the data involved. Genetic information is an especially sensitive category under the law. Unlike Social Security numbers that can be changed or passwords that can be reset, DNA is permanent, highly personal, and predictive of health outcomes that extend to biological relatives. The plaintiffs argue that Congress recognized this when it passed the Genetic Information Nondiscrimination Act (GINA) in 2008. Since then, more than a dozen states have enacted additional genetic privacy protections.

The lead statute in this case, Illinois’ GIPA, is one of the most stringent. It requires written permission before genetic test results are disclosed to anyone other than the person tested. The law provides for statutory damages of $15,000 per violation for intentional or reckless violations and $2,500 per violation for negligent ones. The potential exposure in a case like this can be staggering, because the class could reach hundreds of thousands of people.

Why this case could be a turning point

If you’ve been following AI-related privacy cases over the past two years, this case may look familiar. The pattern mirrors what we’ve seen with AI call recording and transcription services: lawsuits against platform developers came first, followed quickly by lawsuits against the enterprise customers that purchased and deployed the tools.

The complaint against Tempus AI is noteworthy because the company serves major health systems, research institutions, and life sciences companies, and has publicly touted the breadth of its data-sharing partnerships. In previous cases, plaintiffs’ lawyers used vendors’ websites, press releases, and earnings reports to identify downstream customers, arguing that those customers knew or should have known that the platforms they used put them in legal jeopardy.

Healthcare and medtech companies that license AI-powered diagnostic, research, or clinical decision support tools could find themselves named as defendants simply because they are known customers.

4 things employers and medtech companies should do now

- First, audit your relationships with your AI vendors. If your organization uses AI-powered health, diagnostics, or research tools, find out exactly what data flows through them and where it goes, including whether that data is used to train models or licensed to third parties.

- Second, review vendor contracts. Does the contract with the platform provider include representations regarding consent, anonymization, and downstream use of data? What data can the vendor’s employees access? Are there indemnification provisions that protect the organization if the vendor’s actions are later found to be illegal?

- Third, know your state law obligations. The plaintiffs’ attorneys deliberately assembled a nationwide class spanning multiple state privacy regimes. If you operate in California, Illinois, New York, or other states with robust genetic or health data privacy laws, you face higher risk and a greater obligation to stay current with local requirements.

- Fourth, monitor the litigation. If this lawsuit survives initial motions and advances to class certification, we can expect a wave of similar lawsuits targeting not only platform developers but also healthcare employers and medical technology companies that incorporate AI tools trained on patient data without verified consent.