By Stephen Whitfield, Senior Editor

Rig power demand can fluctuate rapidly throughout the well construction process – for example, in North American unconventionals, where operations have become far more power intensive and variable over the past decade. Drilling contractors recognize that keeping more generators online than what’s required for operational purposes wastes fuel and unnecessarily increases equipment wear. On the other hand, they also know that keeping too few generators online risks power limitations that can interrupt drilling. Further, they understand that generators perform most efficiently when operating near their rated output.

Therefore, to achieve the highest levels of performance and efficiency with engine and fuel management, generator configurations need to be constantly adjusted according to changing needs. Yet, although companies recognize this as an opportunity to reduce fuel use, emissions and maintenance costs, generator management on most rigs today still relies heavily on manual judgment and labor.

“Looking at the demand we typically see from a rig, we can expect the number of generators we need for a given operation,” said Michael Zhang, Lead Data Scientist at Patterson-UTI. “We expect that usage to go up and down with the amount of power that the rig needs to use. But in reality, sometimes we’re just leaving the generators on the whole time, and there’s no adjustment. Maybe the rig crews are distracted or they’re dealing with other problems. We want to do this right and optimize the amount of generators running.”

Work has been ongoing in recent years to achieve this kind of optimization. Patterson-UTI had previously rolled out rule-based control systems – where domain-specific knowledge forms the basis of a decision-making framework – to automate generator start and stop decisions based on measured load. While these systems improved reliability and reduced fuel usage compared with manual operations, they remained sensitive to tuning and could not always adapt well to complex load changes from various drilling operations.

More recently, advances in data acquisition and computing have enabled more sophisticated systems based on predictive and learning-based algorithms, mitigating previous issues with rule-based controls. At the 2026 IADC/SPE International Drilling Conference in Galveston, Texas, on 17 March, Mr Zhang discussed Patterson-UTI’s development and testing of one such system – a reinforcement learning (RL) network designed specifically for generator scheduling. This project, he said, represents the first reported field development of an RL network on land drilling rigs.

“Currently, if you look at a lot of drilling rigs, when we’re using rule-based optimization for generators, we set limits and rules for how we want to control these generators during different operating conditions. But what’s the next frontier? Where can we go from here? We believe that this kind of predictive, machine learning-based optimization is the next step forward,” he said.

Reinforcement learning models for generator management

RL is a machine learning (ML) approach where an autonomous agent (a learned decision-making controller) learns to make decisions by interacting with its environment. The agent is able to learn how to perform a task by trial and error, in the absence of any guidance from a human user. A self-driving car is an example of a system that uses this approach.

The RL model project builds upon previous work Patterson-UTI had done to integrate machine learning into generator management. In 2022, the company had developed an automated generator control system that utilized a rule-based ML advisory component to detect decreases in power demand and recommend when to shut down excess generators, reducing low-load operation. The control system could then automate generator shutdowns and restarts.

However, the ML advisory component lacked predictive capability. Because the system’s logic was rule based, it operated within boundaries set by human domain experts, and the only data it could use to make decisions were recent power readings on the rig – for instance, it would recommend a shutdown once power usage reached a certain threshold.

That rigid boundary led to instances where generators were shut down shortly before power load increased again, forcing drillers to wait while additional generators were brought back online. These mistimed shutdowns disrupted drilling operations and reduced operator confidence in the automation. As a result, many drillers chose to disable or override automatic shutdowns, preferring the predictability of manual control.
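The failure mode described above can be illustrated with a minimal sketch of a threshold rule that looks only at the latest power reading. The per-generator capacity, the 40% threshold and the load trace are hypothetical values chosen for illustration, not figures from Patterson-UTI's system.

```python
from typing import List

GEN_CAPACITY_KW = 1000.0   # hypothetical rated output per generator
SHUTDOWN_FRACTION = 0.40   # hypothetical rule: shed a unit below 40% of online capacity


def rule_based_advice(load_kw: float, online: int) -> str:
    """Return 'add', 'remove' or 'hold' using only the latest power reading."""
    capacity = online * GEN_CAPACITY_KW
    if load_kw > capacity:
        return "add"      # demand exceeds what is currently online
    if online > 1 and load_kw < SHUTDOWN_FRACTION * capacity:
        return "remove"   # units are running lightly loaded
    return "hold"


def replay(loads_kw: List[float], online: int) -> List[str]:
    """Apply the rule to each reading; an action changes the count for the next reading."""
    actions = []
    for load in loads_kw:
        action = rule_based_advice(load, online)
        actions.append(action)
        if action == "add":
            online += 1
        elif action == "remove":
            online -= 1
    return actions


# Demand dips for a single reading, then rebounds.
trace = [1500.0, 700.0, 1600.0, 1600.0]
print(replay(trace, online=2))  # ['hold', 'remove', 'add', 'hold']
```

In this toy trace the rule sheds a generator during the brief dip and has to bring it back one reading later, which is the kind of mistimed shutdown that a purely reactive threshold cannot avoid.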

In contrast, RL agents learn solely from observed experience, without pre-established rules set by domain experts. They begin, effectively, with a blank slate. RL closely mimics natural learning, using feedback (rewards and penalties) to determine the best actions for achieving long-term goals without needing labeled data. This means RL agents could potentially anticipate power needs before they occur.

“With a learning-based system, you’re taking your operational data to determine how your system operates. We’re going away from needing subject matter experts to validate and tune the thresholds, like we would in a rule-based system,” Mr Zhang said. “The boundaries under which our system is operating are now learned through the data and implemented into these statistical-based models.”

RL provides a data-driven framework for optimizing sequential decisions based on experience. At each timestep, the agent observes the system’s state, selects an action and receives a reward – a feedback signal indicating how good its decision was – that reflects fuel and operational efficiency. Over time, the agent learns a policy, or a general course of operations, that selects the actions expected to maximize the total long-term reward.
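One common way to formalize "total long-term reward" is the discounted return, in which later rewards count slightly less than immediate ones. The sketch below assumes that formulation; the discount factor `gamma` and the reward values are hypothetical and are not taken from the paper.

```python
from typing import Sequence


def discounted_return(rewards: Sequence[float], gamma: float = 0.9) -> float:
    """Sum rewards over time, weighting the reward k steps ahead by gamma**k."""
    total = 0.0
    # Work backwards so each step adds its reward to the discounted future total.
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


# Hypothetical comparison: shutting down now saves fuel (+1.0) but causes a
# costly wait for a restart on the next step (-4.0); holding earns nothing.
shutdown_now = discounted_return([1.0, -4.0], gamma=0.5)
hold = discounted_return([0.0, 0.0], gamma=0.5)
print(shutdown_now, hold)  # -1.0 0.0
```

Under these illustrative numbers, the action with the better immediate reward has the worse long-term return, which is the distinction an RL agent is trained to capture.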

In RL notation, the expected long-term reward of taking a given action in a given state is typically represented by the letter Q. The type of agent developed by Patterson-UTI is called a Deep Q-network, meaning that it uses a neural network to approximate these Q-values and selects actions based on them. This enables the agent to learn directly from operational or simulated data without requiring explicit mathematical models of the environment.
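In standard Q-learning, Q(s, a) is an estimate of the long-term reward for taking action a in state s, and it is nudged toward the observed reward plus the best estimated value of the next state. The sketch below shows that update with a lookup table; a Deep Q-network replaces the table with a neural network. The state labels, learning rate `alpha` and discount `gamma` are assumptions for illustration and do not describe Patterson-UTI's implementation.

```python
from collections import defaultdict
from typing import DefaultDict, Tuple

ACTIONS = ("add", "remove", "maintain")
QTable = DefaultDict[Tuple[str, str], float]


def q_update(q: QTable, state: str, action: str, reward: float,
             next_state: str, alpha: float = 0.5, gamma: float = 0.9) -> float:
    """Apply one tabular Q-learning update and return the new Q(state, action)."""
    # Value of the best action available from the next state.
    best_next = max(q[(next_state, a)] for a in ACTIONS)
    target = reward + gamma * best_next
    # Move the current estimate a fraction alpha toward the target.
    q[(state, action)] += alpha * (target - q[(state, action)])
    return q[(state, action)]


q: QTable = defaultdict(float)  # every estimate starts at 0.0, a "blank slate"
first = q_update(q, "low_load", "remove", reward=1.0, next_state="low_load")
second = q_update(q, "low_load", "remove", reward=1.0, next_state="low_load")
print(first, round(second, 3))  # 0.5 0.975
```

The second update is larger than a simple average of rewards because the target also includes the discounted value the agent now expects from the next state.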

“The agent is observing different states on the rig. We’ll see things like rig power demand, the operational state, generator status and so forth. Then, it thinks about what it can do to maximize the long-term value for this rig,” Mr Zhang said. “It has options it can take at any given moment. It takes an action, it gets new information from the rig, and it asks that same question – how can I maximize long-term value? Eventually, the learnings from these actions – the rewards – accumulate over time. With these types of models, the key is learning how to optimize that long-term cumulative reward. Ultimately, it’s not just thinking about what it can do right now but also taking into account future states that come off of those decisions.”

Development and installation

To train the model, Patterson-UTI used historical data from 104 land rigs. For each rig, the company input into the model two months of surface data traces capturing total rig power demand, generator performance and contextual drilling parameters.

During training, the RL system would look at the data and choose among three control actions: adding generators, removing them or maintaining the number online. For each observed operating condition, the training process considered feasible generator adjustments and estimated their short-term impacts. The agent assessed the trade-offs among fuel efficiency, generator stability and reserve sufficiency. “The model essentially played through our historical data like a video game. It sees the data, makes decisions, and we analyzed how well we think it did for its decision. Going through that data, it learned how to react in different situations,” Mr Zhang said.
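As a rough illustration of how the three actions and the trade-offs among fuel efficiency, generator stability and reserve sufficiency might be scored, the sketch below defines a per-step reward. The structure, the weights and the 1,000-kW generator capacity are all hypothetical; the article does not describe the actual reward function.

```python
from dataclasses import dataclass

ACTIONS = ("add", "remove", "maintain")
GEN_CAPACITY_KW = 1000.0  # hypothetical rated output per generator


@dataclass(frozen=True)
class Weights:
    fuel: float = 1.0        # cost per generator kept running (fuel proxy)
    switching: float = 0.5   # cost of a start or stop (stability proxy)
    shortfall: float = 10.0  # cost when demand exceeds online capacity (reserve proxy)


def step_reward(load_kw: float, online_before: int, action: str,
                w: Weights = Weights()) -> float:
    """Score one action as the negative of its fuel, switching and shortfall costs."""
    delta = {"add": 1, "remove": -1, "maintain": 0}[action]
    online = max(1, online_before + delta)  # always keep at least one unit running
    fuel_cost = w.fuel * online
    switch_cost = w.switching if online != online_before else 0.0
    shortfall_cost = w.shortfall if load_kw > online * GEN_CAPACITY_KW else 0.0
    return -(fuel_cost + switch_cost + shortfall_cost)


def best_immediate_action(load_kw: float, online: int) -> str:
    """Pick the action with the highest one-step reward (no look-ahead)."""
    return max(ACTIONS, key=lambda a: step_reward(load_kw, online, a))


print(best_immediate_action(1500.0, 2))  # maintain
print(best_immediate_action(700.0, 2))   # remove
```

Choosing the best one-step reward, as `best_immediate_action` does, is still short-sighted. In an RL setup, rewards of this kind would instead be accumulated over many steps of historical data so the agent can learn when a tempting shutdown is likely to be followed by a load increase.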

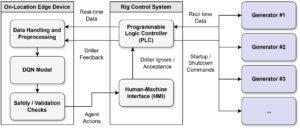

Once the model was trained and a working agent was created, Patterson-UTI developed a method to integrate the agents into its rigs’ control systems. Mr Zhang said the company focused on reusing its existing generator management framework as much as possible in order to minimize development and testing efforts. It also prioritized maintaining a consistent and familiar interface between the driller and the RL agent.

Real-time power data from each engine is typically transmitted to the central rig control system through programmable logic controllers (PLCs). However, while PLCs provide deterministic control with fast scan times, Mr Zhang said they are not well suited for memory-intensive or adaptive algorithms. Therefore, Patterson-UTI installed an edge device, housed in the variable frequency drive (VFD) control house, to perform higher-level decision logic. This device communicates bidirectionally with the rig PLC network and cloud data services. It continuously monitors generator load, calculates features and determines whether startup or shutdown actions are advisable.

“For the Patterson rigs where we deployed this system, we already had the controller logic in place for the rule-based recommendation system, and all the adapters for the edge device were already in place. So, for us to deploy, we essentially took out the logic for the rule-based decision making and replaced it with this RL model. From our standpoint, the implementation was pretty much drop in,” Mr Zhang said.

Field testing and future development

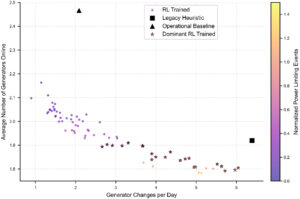

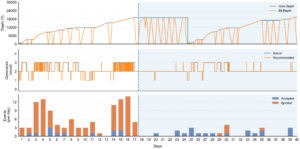

Field trials of the RL agent were conducted in 2023 and 2024 on nine land rigs that had previously used rule-based generator management systems. These rigs were selected for higher load variability, which Mr Zhang said would act as a stress test for the model. Within this more demanding environment, the RL agent was tested to see if it could maintain equivalent generator counts compared with the rule-based model while reducing shutdown frequency and advisory dismissals.

Fewer shutdowns at a similar level of generator run time, he added, translate directly to lower mechanical wear on engines and switchgear, fewer maintenance interventions and decreased wear on the pneumatic starting motor. A reduction in the number of ignored advisories would also be an important sign of the model’s improved learning capacity compared with a rule-based system.

“Anyone who is familiar with field operations knows the concept of alarm fatigue. If you receive 10 notifications in a day, you learn to just start ignoring them. You need to know that the system is providing meaningful recommendations that you can trust,” he said.

Results showed that generator shutdowns decreased by a median of 2.3 per day, while ignored advisories fell by 5.9 per day. Average generators online remained effectively unchanged (mean increase of 0.04 per day), indicating that the improvement in shutdowns and ignored advisories came from better timing of actions.

Mr Zhang said that Patterson-UTI currently plans to expand deployment of the RL model to additional rigs in the company’s fleet. It’s also planning for future development focusing on further quantifying long-term fuel use and maintenance benefits. DC

For more information, please see IADC/SPE 230679, “Predictive Generator Management for Drilling Operations: A Deep Reinforcement Learning Framework.”