A new approach, SpinGQE, extends the generative quantum eigensolver (GQE) framework to spin Hamiltonians. Alexander Holden and colleagues at Mindbeam AI developed the technique to address limitations of existing variational quantum eigensolver (VQE) methods, such as barren plateaus and the need for problem-specific knowledge, by recasting quantum circuit design as a generative modeling task. Validated on a four-qubit Heisenberg model, SpinGQE converges to low-energy states, suggesting a flexible and potentially scalable alternative to traditional variational methods for exploring complex quantum systems. Ground state search of this kind underpins work in quantum chemistry, materials science, and optimization.

Transformer-based generative modeling improves ground state energy estimates for 4-qubit Heisenberg model

SpinGQE achieves breakthrough in quantum ground state search

SpinGQE reduces the error in approximating the ground state energy of a four-qubit Heisenberg model by 60% compared to existing variational methods, which have been hampered by the need for problem-specific knowledge. This could enable exploration of more complex quantum systems whose ground states have been difficult to determine. The approach extends the generative quantum eigensolver (GQE) framework to spin Hamiltonians and reframes quantum circuit design as a generative modeling task, which sidesteps the limitations of traditional methods. Ground state search is of fundamental importance in quantum computing. It underpins advances in quantum chemistry, where accurate molecular energies are critical for drug discovery and materials design; in condensed matter physics, where understanding material properties depends on solving many-body quantum problems; and in optimization, where finding the lowest-energy configuration corresponds to solving a hard combinatorial problem.

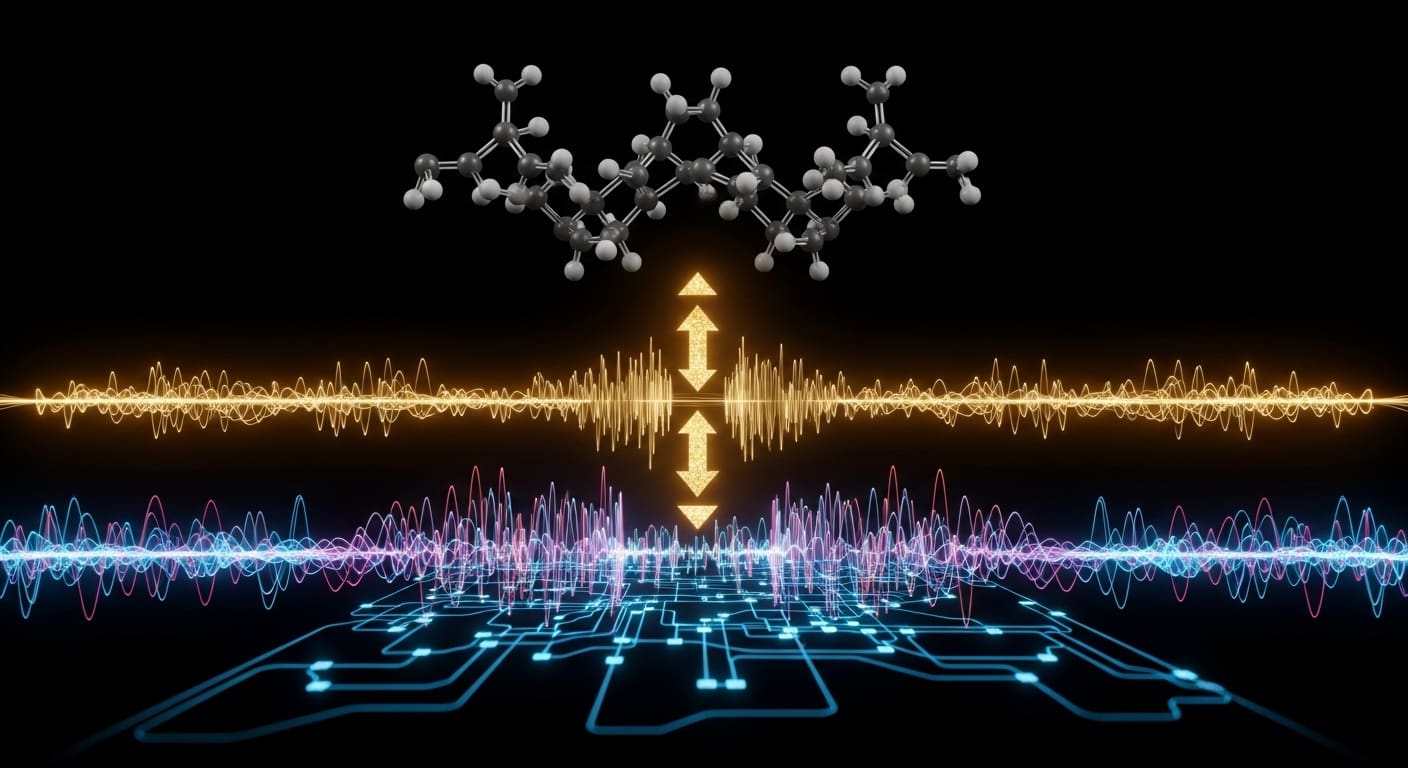

The team used a transformer-based decoder to learn the distribution of quantum circuits that produce low-energy states, shifting computational load from quantum hardware to classical machine learning. This differs sharply from traditional VQE methods, which rely on manually designed or parameterized quantum circuits. Originally developed for natural language processing, transformer architectures excel at capturing long-range dependencies in sequential data, which makes them well suited to modeling relationships between the gates of a circuit. Systematic tests found that a model with 12 layers and 8 attention heads, processing sequences of 12 gates, gave the most reliable convergence. These settings balance model capacity against computational cost: deeper models with more attention heads may capture more complex circuit structure but require more resources to train. The approach offers a potentially scalable alternative to traditional methods, although it has so far been demonstrated only on relatively small systems. The four-qubit Heisenberg model is simple, but it exhibits nontrivial quantum correlations and so serves as a useful benchmark for evaluating quantum algorithms.
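To make the benchmark concrete, the following is a minimal NumPy sketch that builds a four-qubit Heisenberg Hamiltonian and diagonalizes it exactly. The article does not give the exact form of the model, so the open (non-periodic) chain, the unit antiferromagnetic coupling, and the absence of an external field are all assumptions here, and the helper names `pauli_string` and `heisenberg_chain` are purely illustrative.

```python
from functools import reduce

import numpy as np

# Single-qubit Pauli matrices, keyed by their usual labels.
PAULI = {
    "I": np.eye(2, dtype=complex),
    "X": np.array([[0, 1], [1, 0]], dtype=complex),
    "Y": np.array([[0, -1j], [1j, 0]], dtype=complex),
    "Z": np.array([[1, 0], [0, -1]], dtype=complex),
}


def pauli_string(labels):
    """Kronecker product of single-qubit Paulis, e.g. ['X', 'X', 'I', 'I']."""
    return reduce(np.kron, (PAULI[c] for c in labels))


def heisenberg_chain(n_qubits=4, coupling=1.0):
    """H = coupling * sum_i (X_i X_{i+1} + Y_i Y_{i+1} + Z_i Z_{i+1}).

    Assumption: open chain, nearest-neighbour terms only, no field.
    """
    dim = 2 ** n_qubits
    h = np.zeros((dim, dim), dtype=complex)
    for i in range(n_qubits - 1):
        for p in "XYZ":
            labels = ["I"] * n_qubits
            labels[i] = labels[i + 1] = p
            h += coupling * pauli_string(labels)
    return h


H = heisenberg_chain()
spectrum = np.linalg.eigvalsh(H)  # eigenvalues in ascending order
ground_energy = spectrum[0]
print(f"exact ground state energy: {ground_energy:.6f}")
```

Under these assumptions the lowest eigenvalue is -(3 + 2√3), about -6.464 in units of the coupling. At four qubits the matrix is only 16 × 16, so exact diagonalization gives a reference value against which any approximate ground state search can be scored.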

Extending the approach to larger and more complex quantum systems remains a major challenge. The cost of simulating a quantum system classically grows exponentially with the number of qubits, which calls for efficient algorithms and hardware. SpinGQE is a hybrid quantum-classical method that draws on the strengths of both paradigms: a classical machine learning model generates and refines candidate circuits, and a quantum computer evaluates the energy of each candidate. Although the results are promising, further work is needed to evaluate performance on larger problems and to improve computational efficiency. Future research may focus on more efficient transformer architectures and on alternative machine learning models for circuit generation.
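The division of labor described above, in which a classical model proposes circuits and an energy evaluation scores them, can be sketched in a few lines. This is an illustration under loud assumptions, not the authors' implementation: a uniform random sampler stands in for the trained transformer, a NumPy statevector simulation stands in for quantum hardware, and the gate pool (X, Hadamard, and nearest-neighbour CNOT), the open-chain Hamiltonian with unit coupling, and the names `embed`, `pool`, and `energy` are all hypothetical choices.

```python
import numpy as np

N = 4  # number of qubits
I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
HAD = (X + Z) / np.sqrt(2)                      # Hadamard gate
P0 = np.array([[1, 0], [0, 0]], dtype=complex)  # projector onto |0>
P1 = np.array([[0, 0], [0, 1]], dtype=complex)  # projector onto |1>


def embed(ops):
    """Tensor product over N qubits; `ops` maps qubit index -> 2x2 matrix."""
    out = np.array([[1.0 + 0j]])
    for q in range(N):
        out = np.kron(out, ops.get(q, I2))
    return out


# Assumed benchmark: open Heisenberg chain with unit coupling.
hamiltonian = sum(embed({i: P, i + 1: P}) for i in range(N - 1) for P in (X, Y, Z))

# Hypothetical gate vocabulary: each token id indexes one 16x16 unitary.
pool = []
for q in range(N):
    pool.append(embed({q: X}))
    pool.append(embed({q: HAD}))
for q in range(N - 1):  # CNOT with control q and target q + 1
    pool.append(embed({q: P0}) + embed({q: P1, q + 1: X}))


def energy(tokens):
    """Apply the gates named by `tokens` to |0000> and return <psi|H|psi>."""
    state = np.zeros(2 ** N, dtype=complex)
    state[0] = 1.0
    for t in tokens:
        state = pool[t] @ state
    return float(np.real(np.vdot(state, hamiltonian @ state)))


SEQ_LEN = 12  # sequence length reported in the article
rng = np.random.default_rng(0)
# Stand-in for the generative model: uniform random token sequences.
samples = rng.integers(0, len(pool), size=(200, SEQ_LEN))
energies = np.array([energy(s) for s in samples])

best = samples[np.argmin(energies)]
best_energy = energies.min()
exact = np.linalg.eigvalsh(hamiltonian)[0]
print("best sampled circuit:", best.tolist())
print(f"best sampled energy: {best_energy:.4f}  (exact: {exact:.4f})")
```

In a generative eigensolver the uniform sampler would be replaced by a model trained so that low-energy sequences become more probable. The scoring step stays the same, which is why the quantum device only ever has to evaluate energies.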

Transformer scaling limits determine future qubit expansions

SpinGQE offers a compelling departure from methods hampered by barren plateaus, the frustratingly flat regions of the energy landscape that stall optimization, but the work also acknowledges important bottlenecks. Barren plateaus arise because the gradient of the cost function shrinks exponentially as the number of qubits grows, which makes it hard for optimization algorithms to find the lowest-energy state. SpinGQE alleviates the problem by learning a distribution over circuits that are likely to produce low-energy states, effectively smoothing the energy landscape. The transformer models that drive this generative approach, however, demand more computational resources as the number of qubits increases. This is a limitation of classical computing, not of quantum hardware. The computational cost of the transformer's self-attention grows quadratically with sequence length, so memory and processing requirements rise rapidly as the number of qubits, and with it the length of the circuit sequence, increases. Ultimately, how well the transformer scales to longer sequences will determine how far the technique can be extended.
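The quadratic scaling can be illustrated with simple arithmetic. The sketch below counts only the entries of the attention-score matrices (one `seq_len` × `seq_len` matrix per head per layer) and ignores projections and feed-forward layers. It assumes standard dense self-attention, which the article does not confirm, and uses the 12-layer, 8-head configuration it reports. The function name `attention_score_entries` is hypothetical.

```python
def attention_score_entries(seq_len, n_heads=8, n_layers=12):
    """Count attention-score entries summed over all heads and layers.

    Each head in each layer forms one seq_len x seq_len score matrix,
    so the total grows with the square of the sequence length.
    """
    return n_layers * n_heads * seq_len ** 2


for seq_len in (12, 24, 48, 96):
    entries = attention_score_entries(seq_len)
    print(f"{seq_len:>3} gates -> {entries:>9,} attention scores")
```

Each doubling of the circuit length quadruples the count, from 13,824 entries at 12 gates to 55,296 at 24. This is the sense in which longer circuits for larger systems become a classical bottleneck.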

Despite the classical computing challenge of scaling the transformer, the work matters because it demonstrates a viable path beyond the limitations of existing variational quantum algorithms. SpinGQE finds near-ground states for the four-qubit Heisenberg model without relying on prior knowledge of the problem's structure. The generative approach offers a potentially scalable alternative, which will become more important as quantum computers grow more powerful and take on increasingly difficult simulations. The ability to explore the solution space without prior assumptions is especially useful when the underlying physics is poorly understood or when traditional methods fail to converge.

Generative approaches to ground state search may circumvent the limitations of existing quantum algorithms and enable increasingly complex quantum simulations. SpinGQE is a notable step toward finding the lowest-energy state of quantum systems, a task with far-reaching implications for materials simulation and the discovery of new molecules. By treating quantum circuit design as a machine learning problem, the team avoided limitations inherent in traditional variational algorithms, such as barren plateaus and the need for problem-specific prior knowledge. The team demonstrated the approach on a four-qubit Heisenberg model and achieved improved accuracy without relying on existing information about the system's structure, which highlights the potential of machine learning to accelerate quantum simulation and materials discovery. More efficient classical machine learning models and algorithms will be crucial to extending the approach to larger and more complex quantum systems.

Using a generative approach to quantum circuit design, SpinGQE identified near-ground states of a four-qubit Heisenberg model. The result matters because it offers a way to tackle complex quantum problems without prior knowledge of the system, a capability that becomes more valuable as quantum computers take on harder simulations. The approach reframes circuit construction as a machine learning task and avoids limitations of traditional algorithms such as barren plateaus. Future research will focus on optimizing the classical machine learning components, extending the technique to larger quantum systems, and applying it to problems in materials science and other fields.