AI video tools promise complete control, but a hidden “entanglement of concepts” connects identity, expression, and behavior, forcing hacks and template tricks that shatter the myth of easy GenAI magic.

Opinion: Since I last addressed this problem, the entanglement of concepts in trained AI systems has scaled up to a much wider range of users, without any corresponding deepening of their understanding of it.

At the time, autoencoder deepfake systems (namely the now-defunct DeepFaceLab and the non-porn-centric FaceSwap, both derived from the disgraced and quickly banned Reddit code released in 2017) were the only game in town for creating relatively photorealistic human deepfakes.

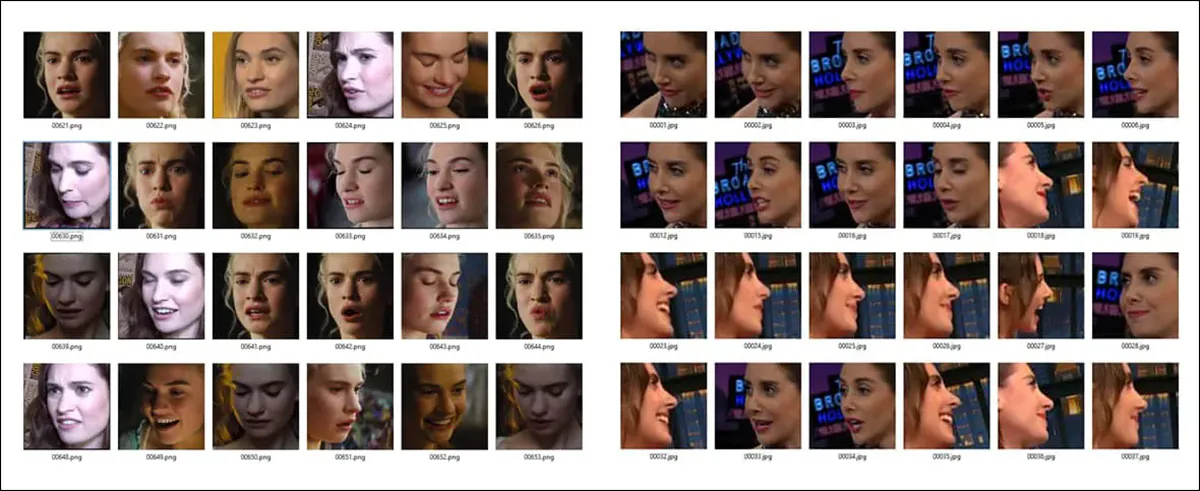

These systems relied on extensive facial training datasets intended to tell the AI model A) what the person looks like at rest (a standard reference embedding), and B) how the person looks across the range of states that a face can reflect, from sleep to laughter, horror, boredom, irony, sorrow, etc.

Identity is not learned in isolation, but together with facial expressions. Additionally, where facial data for a particular emotion is available only from a certain extreme angle, the emotion tends to become associated with that angle, and vice versa.

The problem was that, since a canonical identity typically has to be inferred from facial captures that are not themselves “neutral,” the preponderance of smiles and grins obtained when scraping stock datasets would shift the distribution toward a “smile default.” This happened because the web-scraped training data that informed these models typically included a large number of red carpet paparazzi shots, though there are other equally plausible reasons why a dataset could be biased toward one type of image.

In other words, the autoencoder system had to attempt to extract a “neutral” identity concept from thousands of images in which the facial features were distorted away from a normal expression.

The system also needed to disentangle the semantic facial concepts of different emotions from the angle at which the face was photographed. This means that if the only “fear” expression available was captured in profile view, the trained system could optimally reproduce that emotion only from that view.
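The coupling between expression and viewing angle can be pictured as a coverage problem over expression/angle pairs. The following is a minimal sketch, assuming that face crops have already been labeled with an expression and a coarse pose bucket. The labels, the bucket names, and the `single_angle_expressions` helper are hypothetical, and are not taken from DeepFaceLab, FaceSwap, or any other tool mentioned here.

```python
from collections import defaultdict


def single_angle_expressions(samples):
    """Return {expression: angle} for expressions seen from only one angle.

    `samples` is an iterable of (expression, angle) label pairs. Any
    expression listed in the result is a candidate for entanglement
    with its sole viewing angle.
    """
    angles_by_expression = defaultdict(set)
    for expression, angle in samples:
        angles_by_expression[expression].add(angle)
    return {
        expression: next(iter(angles))
        for expression, angles in angles_by_expression.items()
        if len(angles) == 1
    }


# Hypothetical labels: "fear" is only ever seen in profile.
samples = [
    ("smile", "frontal"), ("smile", "three_quarter"), ("smile", "profile"),
    ("neutral", "frontal"), ("neutral", "three_quarter"),
    ("fear", "profile"), ("fear", "profile"),
]
at_risk = single_angle_expressions(samples)
print(at_risk)
```

A check of this kind only flags the imbalance; it does nothing to fix it, which would require additional data or an architecture capable of separating the two factors.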

Facing Forward

As diffusion-based approaches took over the generative AI image (and later video) scene from 2022 onward, generative systems became much better at extrapolating accurate facial expressions from limited facial data.

Even the extremely difficult challenge of creating a convincing profile view has been largely overcome by the current state of the art. Expression data is now separated from identity very effectively, and the type of live deepfake puppetry pioneered by the autoencoder-driven DeepFaceLive streaming system now has many effective offline diffusion-based counterparts, with real-time execution possibly to follow in the future.

Various examples of avatars driven by source videos, from the “FlashPortrait” project. In this case, it doesn’t matter which side the “realistic” domain is on. Source

But as GenAI’s canvas widens and its output becomes more sophisticated, the entanglement problem simply spreads to multiple other areas, where it is now being “fixed” by some fairly cheap and fairly old tricks. If you don’t know what these tricks are, you may form an overly positive impression of how rapidly video and image AI is evolving and overcoming old bugbears.

Talkative Cat

Hopefully the above helps to explain why the older autoencoder systems from 2017 had a hard time separating identity and emotion. The reason is that either A) there was too much of one type of data, or the available examples of an important type of data were too specific, either of which can skew the distribution; and/or B) the model architecture lacked the capacity to separate these characteristics, and tended to “glue” them together during inference unless the user took special care to ensure balance within the dataset.

For exactly the same reason, similar issues have arisen with many open source and proprietary video models in recent years, but have been overshadowed by larger criticisms regarding hallucinations, lack of censorship, and various other topics.

For example, in the Wan2.+ systems, many users find it very difficult to stop generated characters from talking constantly, and often it is also difficult to stop them from looking at the camera.

The latter problem (staring at the camera, or breaking the fourth wall) has occurred in various image-only diffusion systems, owing to the prevalence of “looking at the camera” photos in web-scraped datasets such as LAION, and predates the advent of video synthesis systems.

The issue of “talkative” characters comes from the “influencer” videos that are so plentiful on YouTube. These videos naturally supply thousands of hours of first-person talk, and are often compiled into datasets that allow research scientists to launder web-scraping by providing an academic context.

However, unless the original curator or subsequent curators are careful to limit the number of videos of this type, and to balance them against a larger number of other types of footage, the video model will become seriously biased. The bias then has to be addressed either through stopgap remedies or through a variety of third-party ancillary systems.
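The curation step described above, limiting one over-represented type of footage relative to everything else, can be sketched in a few lines. This is an illustration only, assuming each clip already carries a category label; the `talking_head` label, the 20% ceiling, and the `cap_category` helper are hypothetical, and real curation pipelines are far more involved.

```python
import random


def cap_category(clips, category, max_percent, seed=0):
    """Downsample `category` so it forms at most `max_percent` of the result.

    Clips from all other categories are kept untouched. Integer
    arithmetic is used so that the cap is exact.
    """
    if not 0 <= max_percent < 100:
        raise ValueError("max_percent must be in the range [0, 100)")
    rng = random.Random(seed)
    capped = [c for c in clips if c["category"] == category]
    others = [c for c in clips if c["category"] != category]
    # Largest k such that k / (k + len(others)) <= max_percent / 100.
    limit = max_percent * len(others) // (100 - max_percent)
    kept = rng.sample(capped, min(limit, len(capped)))
    return others + kept


# Hypothetical dataset: 100 influencer-style clips versus 80 of anything else.
clips = [{"id": i, "category": "talking_head"} for i in range(100)]
clips += [{"id": 100 + i, "category": "other"} for i in range(80)]

balanced = cap_category(clips, "talking_head", max_percent=20)
talking = sum(c["category"] == "talking_head" for c in balanced)
print(talking, len(balanced))
```

The hard part in practice is not the downsampling but obtaining trustworthy category labels for millions of clips in the first place.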

Faced with Wan’s “chattiness” issue, Reddit user u/Several-Estimate-681 came up with a workaround that leverages the Wan 2.1 Infinite Talk V2V system (whose settings would normally encourage influencer-style talkativeness) to allow the user to silence the rendered character.

Just listen: a workaround to achieve character attentiveness in Wan2.+. Source

Obviously, shortcuts of this kind are not low-level architectural solutions. Unless a true solution is discovered and implemented by the creators of the underlying model (casual hobbyists typically don’t have the millions of dollars needed to recreate or rework it), the “whack-a-mole” game of entanglement is likely to be reset to zero with the release of the next version.

Cheap and Easy to Break

There is nothing in the diffusion architecture itself that makes these problems inevitable. In fact, if there were some way to apply truly effective curation, triage, and high-quality captions and annotations to hyperscale datasets with millions of data points, almost all of these problems would disappear.

However, this level of attention to detail is comparable to the Manhattan Project in terms of logistics, scope, required resources, and long-term commitment. In a climate where the emergence of a new architecture, or even a new version, could undo a huge amount of such effort, there is currently no appetite for commitments of this kind.

Therefore, the cheapest approach is still preferred, as long as it is consistent with obtaining a usable model. One example of such “stinginess” is data augmentation, which can produce absurd results when applied inappropriately to the wrong type of video clip in a dataset.

Because data augmentation often reverses the direction of the source video in the dataset, AI models can sometimes learn “impossible” movements. Source
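To make the risk concrete, here is a minimal sketch of augmentation with a guard on reversal. It reads “reverses the direction” as playing a clip backwards in time, which is an assumption; the `time_symmetric` flag and the `augment_clip` helper are likewise hypothetical. A clip is modeled as a plain list of frames, each frame a list of pixel rows.

```python
def augment_clip(clip, time_symmetric):
    """Return the original clip plus its augmented variants.

    `clip` is a list of frames; each frame is a list of rows of pixels.
    `time_symmetric` should be True only for footage that stays
    plausible when played backwards (e.g. a swinging pendulum).
    """
    variants = [clip]
    # Horizontal mirroring: each row of every frame is flipped left-to-right.
    variants.append([[row[::-1] for row in frame] for frame in clip])
    # Temporal reversal: only applied when the caller vouches for the clip,
    # since reversed footage of falling or rolling objects teaches the
    # model motion that cannot happen.
    if time_symmetric:
        variants.append(clip[::-1])
    return variants


# A toy 3-frame clip with 1x2 frames; the pixel values encode frame order.
clip = [[[0, 1]], [[2, 3]], [[4, 5]]]

rolling_stone = augment_clip(clip, time_symmetric=False)
pendulum = augment_clip(clip, time_symmetric=True)
print(len(rolling_stone), len(pendulum))
```

Applying the reversal blindly to every clip is cheaper than deciding, clip by clip, whether the motion is reversible, which is exactly the kind of economy discussed above.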

But overall, stones rolling uphill and characters breaking persona by slipping into “influencer mode” tend to be treated as collateral damage in generative systems that, despite such persistent goofs and Achilles’ heels, can be coaxed into producing impressive results and generating enough awe-inspiring headlines.

Boilerplate Solution

Currently, hundreds of generative video domains, almost all of them breaking a host of new laws in some way and fueling the backlash against GenAI, are enjoying a window of opportunity until law enforcement, blocklists, and other forms of deplatforming take these commercial services down.

Large, high-profile sites of this type, such as Kling and Grok, tend to respond to criticism by either (eventually) adhering to some form of self-censorship or by changing the type of content the platform provides to its users.

But behind these big names, there are hundreds of other fly-by-night operations, constantly meeting the demand for new (and often more extreme) types of content.

Low-effort provisioning of this type rules out the prohibitive cost and effort of training the underlying model from scratch. Very often, even fine-tuning, which would cost significantly less, is out of reach.

Therefore, these sites offer “templates” that are in fact functionally identical to custom-trained LoRAs. For over four years, AI enthusiasts have been training such dedicated LoRA adjuncts to capture desired identities, styles, objects, and (in the case of video LoRAs) motions and actions.

With a LoRA intervening between the user and the underlying model, the results obtained are highly specific to what the LoRA was trained on. Moreover, the broad performance of the model is usually compromised by the weight-bending effects of the LoRA, which reproduces its own subject matter very well, but also ends up injecting that material into every request. Not that a fly-by-night GenAI video site allows this level of control: it simply offers an [ACTION OF YOUR CHOICE] template, and interprets the input text/images/video in whatever way gives the template the best chance of being applied successfully.
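The “weight-bending” effect follows from how a LoRA is usually merged: a low-rank product is added to a frozen weight matrix, as in W' = W + (alpha / r) · B · A. The toy sketch below, in dependency-free Python, shows that the adapted weights change the output even for an input unrelated to the direction the adapter was meant to affect. The matrices, sizes, and helper names are illustrative assumptions, not values from any real model.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


def apply_lora(weight, lora_b, lora_a, alpha, rank):
    """Merge a low-rank update into `weight`: W + (alpha / rank) * B @ A."""
    scale = alpha / rank
    delta = matmul(lora_b, lora_a)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(weight, delta)]


def forward(weight, x):
    """Apply a weight matrix to an input vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weight]


base = [[1.0, 0.0], [0.0, 1.0]]   # frozen base weights (toy identity layer)
lora_a = [[1.0, 1.0]]             # rank-1 "down" projection, shape 1x2
lora_b = [[0.5], [0.0]]           # rank-1 "up" projection, shape 2x1

adapted = apply_lora(base, lora_b, lora_a, alpha=1.0, rank=1)

unrelated_input = [0.0, 1.0]
print(forward(base, unrelated_input))     # base model leaves the input as-is
print(forward(adapted, unrelated_input))  # adapted model shifts the output
```

Lowering `alpha` weakens the shift but also weakens the effect the adapter was trained to produce, which is the trade-off that template-style services hide from the user.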

For obvious reasons, samples from such websites cannot be embedded in this article. However, the recent research literature offers several comparable examples. For instance, the EffectMaker project shows the principle at work, applying particular actions to images specified by the user.

EffectMaker allows specific, fine-tuned effects to be applied to custom inputs. Source

Even in such highly selective and targeted scenarios, users often complain of having to spend tokens on multiple attempts to get a good result. This should not necessarily be attributed to the provider’s greed or sharp practice; it is more likely due to the innately “haphazard” nature of DiT-based GenAI frameworks.

It could also be argued that the general public gets its impression of GenAI’s capabilities from cherry-picked examples that are not representative of what the average novice user is likely to obtain. Even if users try six templates (i.e., LoRAs provided by an AI website), they tend to publish and praise only the best of the results, giving the impression that such results come from simply querying the underlying model, and that the generative underlying model is much more disentangled than it actually is.

Conclusion

The literature continues to explore the problem of entanglement, which was first seriously considered around 2020 in a collaboration between Max Planck and Google, titled A Sober Look at the Unsupervised Learning of Disentangled Representations and their Evaluation.

Additionally, various successors, such as Disentanglement via Contrast (DisCo), appear regularly, and the research scene is keenly aware of the issue, far beyond the public’s understanding of what AI cannot do in this regard.

A 2024 study from China suggests that we may not need to solve entanglement at all in order to solve the problems it poses. Historically, this rings true, since many difficult problems in computer vision were never solved as such, but were instead superseded by completely new techniques and approaches.

Until such a contender emerges, we will likely need to keep applying hotfixes and Band-Aids to GenAI’s shortcomings and limitations, and to live with the public’s overestimation of the flexibility and malleability of the underlying model.

First published Monday, March 23, 2026