These days of war highlight, perhaps more clearly than ever, the essential role that advanced science and technology play in our very survival. There is no way to know how many Israeli lives have been saved by missile defense systems developed in Israel, or how much more constrained decision-makers, both here and in the United States, would have been without such means of defending the home front.

The same is true of the wide range of military and intelligence capabilities that have enabled Israel and the United States to achieve near-total control of Iranian airspace. During the Twelve-Day War in June 2025, the Islamic Republic launched a missile attack on the Weizmann Institute of Science in Rehovot, one of the world’s leading research institutions, making it clear that it perceived Israeli science as a potential threat.


These capabilities are also of global importance at a time when international stability is being undermined and institutions such as the United Nations are proving unable to fulfill their mandate to protect world peace. It is no coincidence that the World Economic Forum’s 2026 Global Risks Report identifies geoeconomic conflicts and state-based armed conflict as two key global risks in the coming years.

In a world where high-stakes political and security decisions are made under intense time pressure, uncertainty, and mortal danger, one question demands urgent attention: “Where are the women?” Their absence is felt not only in laboratories and R&D teams, but also in situation rooms, command centers, and other spaces where important decisions are made.

Women make up roughly half of Israel’s population, and their underrepresentation is a problem not only in crises like the one Israel has faced since the October 7 massacre and is likely to face again in the future. It is a serious handicap that impairs the quality of decision-making and impedes the ability to get a complete picture of a country’s society, security, and economy.

The question “Where are the women?” has never been more urgent. Artificial intelligence is emerging as a powerful force that will change the way we make decisions. In security, as in any other field, if we want to stay at the forefront of science and technology, we can’t afford to fall behind in the AI race. Language models and big data technologies are increasingly shaping the way we research, learn, understand, and make decisions.

That’s why the question of who does and does not sit at the tables where these technologies are developed, researched and managed is not just a technical question. It is a moral, social, and civic issue that lies at the very heart of science and the future of human knowledge.


Artificial intelligence is not just a technical field. It is the knowledge infrastructure that shapes how we interpret scientific data, analyze social trends, advance medical research, educate students, develop medicines, formulate policy, and run our economies.

AI systems are influencing decision-making in more and more areas of our lives. Their imprint can be seen in our courts and health care systems, in education, in decisions that affect climate and society, and even in fields such as psychology and history. As reliance on these models increases, so does the impact of the biases embedded in them.

AI models are trained on vast amounts of data to identify patterns and then apply those patterns to decision-making processes in situations for which they were not specifically trained. For example, an image recognition model can estimate the likelihood that a tumor will appear on a medical scan, and a language model can predict the next most likely word in the sentence or paragraph it generates.

Unlike humans, these models have no moral judgments or values. They just analyze the data they are given. The problem lies with the data itself. When data reflects unequal social realities or deep-rooted stereotypes and discriminatory assumptions, AI can absorb those biases and produce output that perpetuates and even reinforces discrimination. This is why uneven representation of women and men in AI training data can have significant social implications.
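To make this concrete, here is a minimal sketch of how a model can absorb a skew from its training data. It is an illustration under stated assumptions, not a description of any real AI system: the four-sentence corpus is invented, and the helper names `train_bigram_counts` and `next_word_probabilities` are hypothetical. The "model" simply counts which word follows which, a far cruder version of the next-word prediction described above.

```python
from collections import Counter, defaultdict


def train_bigram_counts(sentences):
    """Count how often each word follows each other word in the corpus."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def next_word_probabilities(counts, word):
    """Return the relative frequency of each word observed after `word`."""
    followers = counts[word.lower()]
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()} if total else {}


# Invented toy corpus (an assumption for illustration only):
# three of the four sentences pair the engineer with "he".
toy_corpus = [
    "the engineer said he would fix it",
    "the engineer said he was late",
    "the engineer said he agreed",
    "the engineer said she would fix it",
]

counts = train_bigram_counts(toy_corpus)
probs = next_word_probabilities(counts, "said")
most_likely = max(probs, key=probs.get)

print(probs)        # {'he': 0.75, 'she': 0.25}
print(most_likely)  # he
```

Nothing in the code expresses a preference. The 3-to-1 imbalance in the output comes entirely from the imbalance in the sentences the model was given, which is the mechanism the paragraph above describes.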

Among other concerns, the Global Risks Report 2025 highlights the dangers inherent in algorithmic bias in AI systems. The authors emphasize that AI models are one of the factors that could further deepen social polarization in the long term.


Research shows that the underrepresentation of women in roles that shape the design and development of AI systems further deepens these biases. AI models are trained on large datasets that aim to capture the full range of phenomena they address. However, when these phenomena concern human society or fields such as medicine or education, the picture is distorted when women are underrepresented in the data themselves, or when women’s insights and experiences are missing from the development and monitoring of these systems. Artificial intelligence filters the information that reaches us. If that filter is biased, the knowledge it produces will also be biased.

The consequences extend far beyond the world of technology. Biased systems can also lead to serious scientific errors. For example, medical research based on data collected primarily from men may draw flawed conclusions about women’s health or generate social classifications that reinforce harmful stereotypes. As leading companies and public institutions around the world increasingly rely on artificial intelligence for data analysis and decision-making, the lack of women in the design of these systems can lead to significant distortions and biases.

For example, women involved in the development and implementation of AI systems for disease diagnosis may be more sensitive than their male colleagues to the need to collect and analyze data that reflects female-specific symptoms, symptoms that do not necessarily align with those traditionally recognized in medical research, which has historically relied primarily on male subjects. This can reduce gender bias in data analysis and improve diagnostic accuracy.

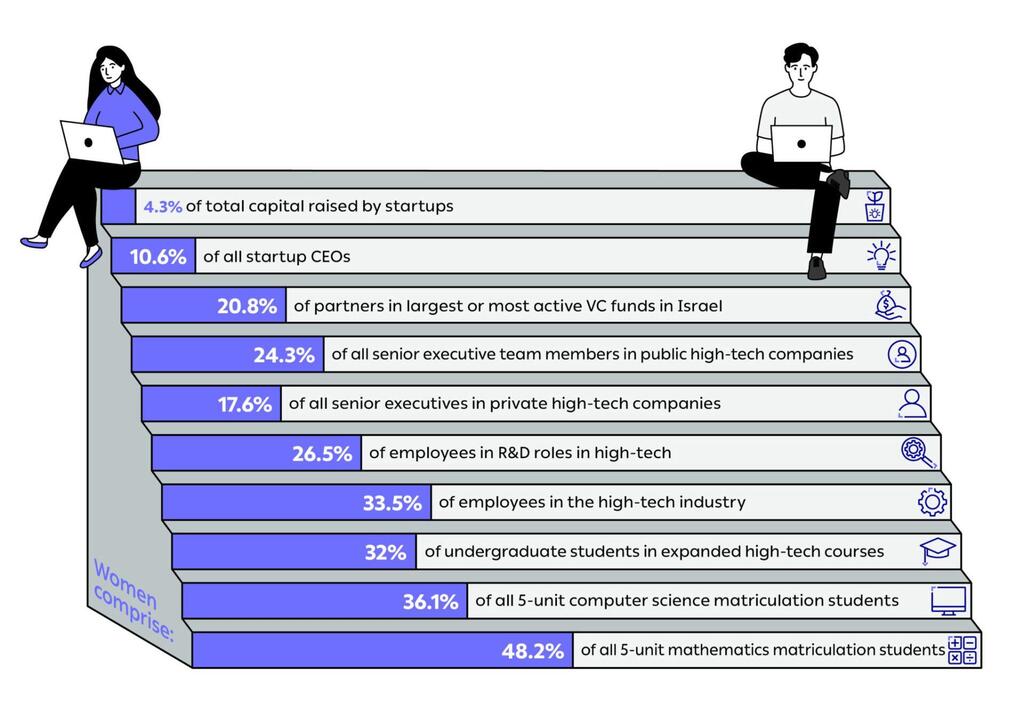

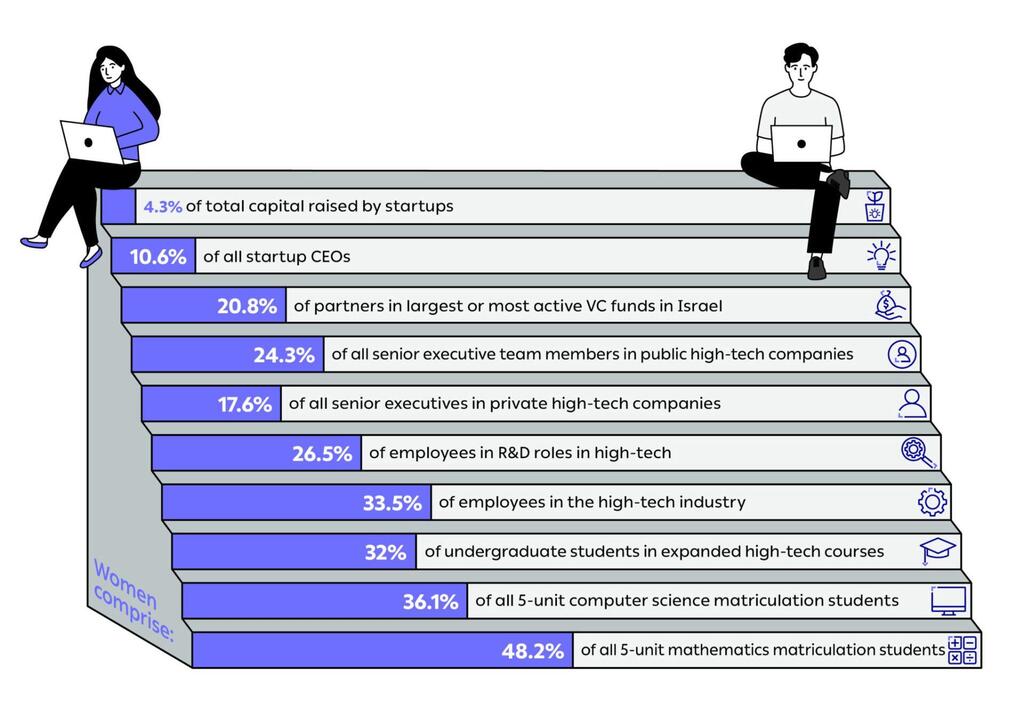

The Israel Innovation Authority’s 2025 report on the status of women in high-tech fields in Israel reveals significant disparities in women’s participation in science and technology. Girls are almost as likely as boys to study advanced mathematics in school, accounting for 48.2 percent of those students, and the number of students taking computer science entrance exams is increasing. Yet women still make up only 32 percent of science, technology, engineering, and mathematics (STEM) undergraduates. The gap is even wider at the highest levels of academia, where only one in five full professorships in STEM fields is held by a woman.


Women remain underrepresented across academia, high tech and the startup ecosystem

(Source: Status Report: Women in High Tech 2025, Israel Innovation Authority)

In high-tech industries, the disparity is even more pronounced. Women make up just over a quarter of employees in development roles, and the proportion decreases further as seniority increases. According to the report, only 10.6 percent of Israeli startup CEOs are women. This limited presence in decision-making positions also affects access to resources: women-led startups receive just 4.3 percent of the total capital raised in the sector. In a country where artificial intelligence is rapidly reshaping science, the economy, and national security, the absence of women represents not only a social imbalance but also a real cost to the quality of research and our ability to fully understand reality.

At the Davidson Institute for Science Education, the education division of the Weizmann Institute of Science, we are working to turn this insight into action. Research shows that girls and young women are attracted to learning environments that combine science with social studies, purpose, and creativity. That’s why our programs for middle and high school students pair interdisciplinary science with hands-on learning that brings together applied research and AI applications.

When students understand that AI is a powerful research tool that goes beyond just coding and can be applied to a wide range of fields in science and everyday life, they can imagine themselves in the field and become active participants. The results speak for themselves. About half of the students at the Davidson Institute are girls.

This is part of a wider effort to encourage girls to get involved in science from an early age. But education alone is not enough. In Israel, as in many other countries, important decision-making in science, technology and policy remains overwhelmingly dominated by men. As scientific, medical, security, and social decision-making increasingly relies on recommendations generated by AI, gender balance is not just a question of fairness. It is essential for sound, rigorous and responsible decision-making.

Unless women play a substantive role in shaping these models, interpreting their results, and overseeing their use, the knowledge gained from them will remain incomplete and scientific accuracy itself will be compromised.

Integrating women into science, leadership, and security roles is not just a social justice issue. It is a fundamental prerequisite for a future in which human knowledge, including science, education, medicine, public policy, economics, and above all security, reflects reality as fully and responsibly as possible. The more societies rely on AI-based systems as lenses through which to understand the world, the greater the risk that gender bias will become deeply embedded in knowledge production and public decision-making processes.

Meaningful representation of women is also essential at all tables where decisions that affect the country as a whole are made, including in cabinets, negotiating teams and subcommittees. This is not a symbolic gesture, but a prerequisite for more responsible, careful and accurate policy decisions. The struggle before us is not just about the place of women in artificial intelligence. It’s about our collective future – about our security, and about the future of human knowledge itself.