Participants

Participants were recruited through the Babylab at Uppsala University from a list of families who had previously expressed interest in participating in research with children. Families were contacted by email or phone and invited to the lab for an eye tracking session. Infants were included only if they were 12-14 months old (± 2 weeks), had no uncorrected visual or hearing impairment, were born after 36 weeks of gestation, and had no traumatic brain injury or neurological condition. The visit lasted about 20-30 minutes in total. After the eye tracking session, caregivers completed a demographic information survey. This study was approved by the Stockholm Regional Ethics Committee and conducted in accordance with the Declaration of Helsinki. Written informed consent was obtained from all caregivers.

In total, data were collected from 55 children. Five were excluded due to technical issues, and four more were excluded from the EMI condition due to an insufficient number of valid trials (see the Eye tracking measurement section). There was no significant difference between included and excluded children regarding age (t(53) = -0.425, p = 0.673), family income (t(46) = 0.278, p = 0.782), or parental education level (t(48) = 0.279, p = 0.782). The final sample consisted of 46 children in the EMI condition and 50 children in the GF condition. Because the primary analyses concern EMI, we report demographic statistics for the sample included in these analyses (Table 1). No ethnicity information was collected, but recruitment and all testing sessions were conducted in Swedish; thus, every infant had at least one caregiver who was fluent in Swedish.

Stimuli

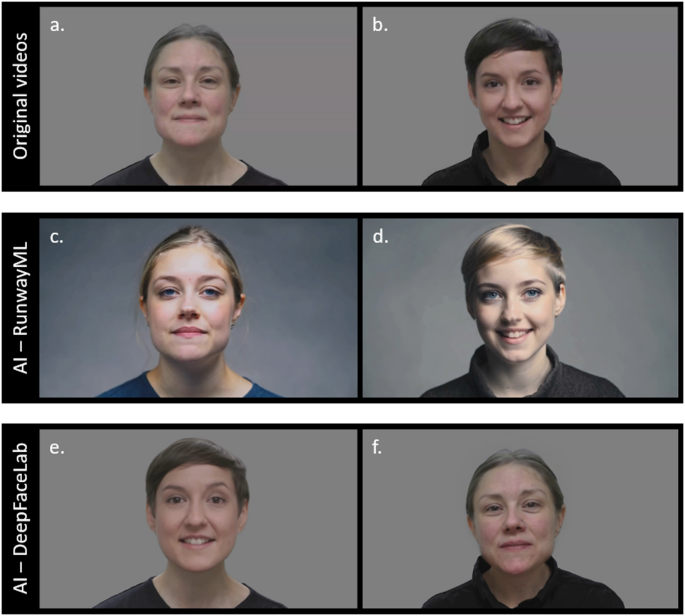

The EMI stimuli consisted of two videos (Figure 1a,b) previously used in studies of infant gaze behavior (e.g., 32). In each video, a woman sings a typical Swedish nursery rhyme. Based on the original videos, we generated new videos using two different AI tools, RunwayML and DeepFaceLab.

Two videos were created using the online software RunwayML Gen-3 Alpha (https://runwayml.com/; see Figure 1c,d), which can generate videos from text prompts, images, and videos as input. We used a combination of text prompts and video; see Supplementary Material S1 for the text prompts used in this study. The goal was to create two AI-manipulated videos that could be compared with the original recordings. To minimize confounding factors that could affect infants' gaze behavior, the AI-manipulated videos were designed to resemble the actors' performances in the original recordings while showing some differences in appearance (such as different hair and eye color).

Additionally, two videos were created using DeepFaceLab, a software tool based on deep learning techniques for realistic face swapping in videos (Figure 2). These videos (Figure 1e,f) were shown to 31 participants in the total sample. These stimuli were included to explore gaze toward AI-manipulated material that more closely resembles the original videos than the stimuli created with RunwayML. All details regarding the creation of these videos can be found in Supplementary Material S2.

The AI-manipulated videos contained the same audio as the original videos. Because the AI-manipulated videos were slightly shorter than the originals (due to limitations of the AI software), the first 10 seconds of every video (original and AI-manipulated) were analyzed.

Video frames presented in the EMI condition. The original videos, depicting person A (a) and person B (b), are shown at the top; the versions manipulated with RunwayML are shown in the middle (c, d), and the versions manipulated with DeepFaceLab are shown at the bottom (e, f). Videos e and f were generated by combining elements of videos a and b: in e, the face from video b was transferred to the person in video a; in f, the face from video a was transferred to the person in video b. Despite these changes, the audio and body movements of the AI-manipulated videos (c–f) remained unchanged from the original target videos (a, b). As a result, the audio and body movements are the same for all videos within each column (left and right). Both actors consented to the publication of their identifiable images and videos and provided written informed consent for the videos/images to be manipulated using artificial intelligence.

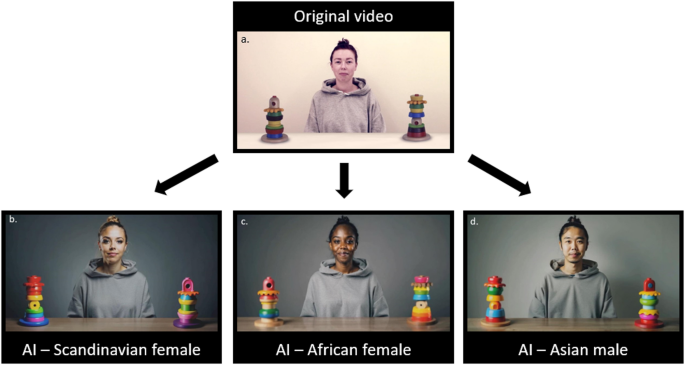

The GF stimuli consisted of AI-manipulated videos (Figure 2b–d) created from one original video (Figure 2a) that has been used in previous studies of GF (e.g., 18). Two versions were created of each video: one in which the actor looks to the left and one in which the actor looks to the right. In total, participants were presented with six GF videos (the original video was not included in the stimuli shown during the eye tracking session). These videos were created using the online software RunwayML Gen-3 Alpha (see Supplementary Material S1 for the text prompts used), with the aim of displaying the wide range of variation that can be generated from a single original video.

Video frames presented in the GF condition. The video at the top left (a) is the original; the remaining videos (b–d) are AI-manipulated. The actors consented to the publication of their identifiable images and videos and provided written informed consent for the videos/images to be manipulated using artificial intelligence.

Eye tracking measurement

Gaze data were collected using a Tobii TX300 eye tracker (120 Hz) integrated with a standard 24-inch screen. Infants sat on their caregiver's lap approximately 60 cm from the screen. The videos included in this study were interspersed with other videos depicting social stimuli. Two versions of the experiment were created (versions A and B), with the videos in version B presented in the reverse order of version A. In both the EMI and GF conditions, 56% of participants were shown version A and 44% were shown version B.

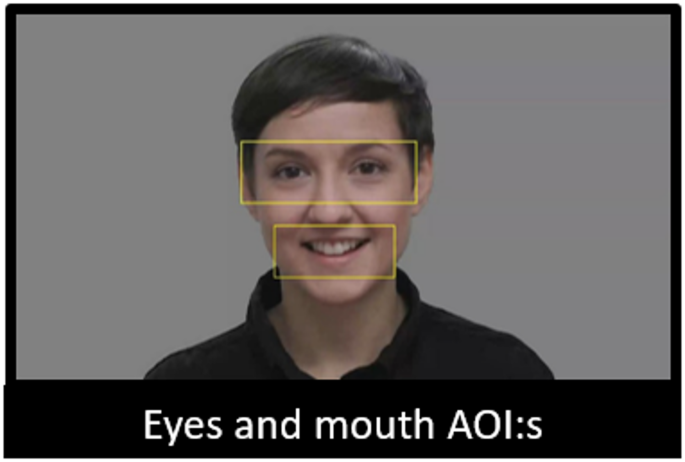

In the EMI condition, all areas of interest (AOIs) were created by first analyzing the stimulus videos with the software OpenFace (5), which detects faces via computer vision and extracts facial landmarks. We then used the pixel coordinates of selected facial landmarks to create dynamic AOIs for each frame of the video. The eye AOI was 510 × 180 pixels and the mouth AOI was 350 × 150 pixels (see Figure 3). EMI was calculated as the total looking time to the eyes divided by the total looking time to the eyes and mouth. Additionally, we created an elliptical face AOI with horizontal and vertical radii of 300 and 400 pixels, respectively.
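
The dynamic AOI construction and the EMI ratio can be sketched in Python as below. This is a minimal illustration under stated assumptions, not the pipeline used in the study: it assumes that OpenFace landmarks have already been exported per video frame and grouped into eye and mouth points (in OpenFace's 68-point scheme these are typically indices 36-47 and 48-67), that each AOI rectangle is centred on the mean of its landmarks, and that the video frame rate `fps` is known. The names `Rect`, `frame_aois`, and `emi` are hypothetical. Because gaze samples arrive at a fixed rate (120 Hz), sample counts are proportional to looking time, so the ratio can be computed directly from counts; only samples within the first 10 seconds are used.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float]

# AOI sizes in pixels, as reported in the text.
EYE_AOI_SIZE = (510, 180)
MOUTH_AOI_SIZE = (350, 150)


@dataclass
class Rect:
    """Axis-aligned rectangle defined by its centre and size (pixels)."""
    cx: float
    cy: float
    w: float
    h: float

    def contains(self, x: float, y: float) -> bool:
        return abs(x - self.cx) <= self.w / 2 and abs(y - self.cy) <= self.h / 2


def centroid(points: Sequence[Point]) -> Point:
    xs, ys = zip(*points)
    return sum(xs) / len(xs), sum(ys) / len(ys)


def frame_aois(eye_pts: Sequence[Point], mouth_pts: Sequence[Point]) -> Tuple[Rect, Rect]:
    """Build the eye and mouth AOIs for one video frame (assumed centring rule)."""
    ex, ey = centroid(eye_pts)
    mx, my = centroid(mouth_pts)
    return Rect(ex, ey, *EYE_AOI_SIZE), Rect(mx, my, *MOUTH_AOI_SIZE)


def emi(
    gaze: Sequence[Tuple[float, Optional[float], Optional[float]]],
    landmarks: List[Tuple[Sequence[Point], Sequence[Point]]],
    fps: float,
    max_t: float = 10.0,
) -> Optional[float]:
    """Eye looking time / (eye + mouth looking time); None if neither AOI was hit.

    gaze: (time in s from video onset, x, y) per sample; x/y None when gaze is lost.
    landmarks: (eye points, mouth points) per video frame.
    """
    eye_n = mouth_n = 0
    for t, x, y in gaze:
        if x is None or y is None or t >= max_t:
            continue  # lost sample or outside the 10 s analysis window
        frame = int(t * fps)
        if frame >= len(landmarks):
            continue  # gaze sample after the last annotated frame
        eye_aoi, mouth_aoi = frame_aois(*landmarks[frame])
        if eye_aoi.contains(x, y):
            eye_n += 1
        elif mouth_aoi.contains(x, y):
            mouth_n += 1
    total = eye_n + mouth_n
    return eye_n / total if total else None
```

Rebuilding the rectangles from each frame's landmarks is what makes the AOIs dynamic: they follow the singer's face as it moves, rather than being fixed screen regions.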

Because creating the DeepFaceLab videos was time-consuming, the first 15 participants were shown two original videos of person A, two original videos of person B, and each person's RunwayML video. To keep the total duration of the experiment the same for all participants, the remaining participants were shown one original video of each person and two AI-manipulated videos of each person (one made with RunwayML and one with DeepFaceLab). We did not include more videos of each person because these videos were interspersed with other social videos (related to other research projects), and we wanted the children to remain attentive enough to focus on the stimuli.
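
The two stimulus sets can be written out explicitly, which shows why the experiment length stayed constant: both sets contain six EMI videos. The sketch below is illustrative only; the function name, the 1-based participant numbering, and the video labels are hypothetical, and the list says nothing about presentation order, since the videos were interspersed with other social videos.

```python
from typing import List, Tuple


def emi_stimulus_set(participant_number: int) -> List[Tuple[str, str]]:
    """Return (person, video type) pairs shown to a participant (1-based numbering)."""
    if participant_number <= 15:
        # First 15 participants: DeepFaceLab videos were not yet available.
        per_person = ["original", "original", "runwayml"]
    else:
        # Remaining participants: one original plus one video from each AI tool.
        per_person = ["original", "runwayml", "deepfacelab"]
    return [(person, kind) for person in ("A", "B") for kind in per_person]


if __name__ == "__main__":
    print(len(emi_stimulus_set(1)), len(emi_stimulus_set(16)))  # prints: 6 6
```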

A trial was classified as invalid if the participant looked at the face for less than 2.5 seconds in total during the trial. For inclusion in further analysis, participants were required to have at least one valid trial for the original video of each person (A and B) and at least one valid trial for each person's AI-manipulated video. In total, four participants were excluded based on these criteria.
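
The trial-validity rule and the inclusion criterion can be sketched as follows. This assumes that each trial has been reduced to one boolean per 120 Hz gaze sample (True when gaze falls in the face AOI) and that RunwayML and DeepFaceLab trials are pooled under a single "ai" label; both the data layout and the function names are hypothetical.

```python
from typing import Iterable, List, Tuple

SAMPLE_RATE_HZ = 120      # Tobii TX300 sampling rate used in the study
MIN_FACE_LOOKING_S = 2.5  # trials with less face looking than this are invalid


def trial_is_valid(face_hits: List[bool]) -> bool:
    """A trial is valid if the infant looked at the face for at least 2.5 s in total."""
    return sum(face_hits) / SAMPLE_RATE_HZ >= MIN_FACE_LOOKING_S


def include_participant(trials: Iterable[Tuple[str, str, List[bool]]]) -> bool:
    """trials: (person 'A'/'B', kind 'original'/'ai', face_hits) for each trial.

    Requires at least one valid trial for every person x video-type combination.
    """
    valid = {(person, kind) for person, kind, hits in trials if trial_is_valid(hits)}
    return all((p, k) in valid for p in ("A", "B") for k in ("original", "ai"))
```

At 120 Hz, the 2.5 s threshold corresponds to 300 face samples; a trial with 299 face samples is invalid however long the trial lasts.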

EMI scores for person A and person B were highly correlated for the original videos (r = …). Therefore, for both the original and AI-manipulated videos, we combined the scores for the videos depicting persons A and B.

Frame from one of the original videos showing the eye and mouth AOIs in the EMI condition

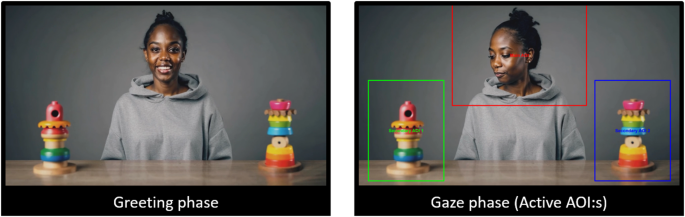

In the GF condition, three rectangular AOIs were created: one covering the actor (800 × 600 pixels) and two covering the toys (450 × 600 pixels each; see Figure 4). GF was assessed using the first-look paradigm, in which the infant's first gaze shift from the actor to either toy is coded as congruent (to the toy the actor looked at) or incongruent (to the other toy). GF was calculated by subtracting the number of incongruent trials from the number of congruent trials, yielding a difference score. With six trials, this score ranges from -6 to 6, with positive scores indicating more gaze following. A trial was classified as valid if the child looked at one of the toys after looking at the actor (regardless of whether it was the toy the actor looked at). At least two valid trials were required for inclusion in further analysis; this criterion did not lead to the exclusion of any participants. In total, 50 participants were included in the GF analysis. The number of invalid trials (in which the infant did not look at either toy) is presented separately for each ethnic group in Supplementary Information S3.

Frames from one of the AI-manipulated GF task videos, showing the greeting phase with the AOIs (left) and the gaze phase (right)
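
The first-look scoring for the GF condition can be sketched in Python as below. It is a simplified illustration, assuming that each gaze-phase sample has already been mapped to one of the three AOIs (the hypothetical labels "actor", "left_toy", "right_toy") or to None, and that the toy the actor looks at is known for each trial. Gaps between the actor look and the toy look are tolerated here; how such gaps were handled in the study is not stated.

```python
from typing import List, Optional, Sequence

TOY_AOIS = ("left_toy", "right_toy")


def first_look(aoi_sequence: Sequence[Optional[str]], target_toy: str) -> Optional[str]:
    """Classify the first toy look after looking at the actor.

    Returns 'congruent', 'incongruent', or None (no toy looked at: invalid trial).
    """
    seen_actor = False
    for aoi in aoi_sequence:
        if aoi == "actor":
            seen_actor = True
        elif seen_actor and aoi in TOY_AOIS:
            return "congruent" if aoi == target_toy else "incongruent"
    return None


def gf_difference_score(first_looks: List[Optional[str]]) -> Optional[int]:
    """Congruent minus incongruent first looks; None if fewer than two valid trials."""
    valid = [look for look in first_looks if look is not None]
    if len(valid) < 2:
        return None
    return valid.count("congruent") - valid.count("incongruent")
```

For example, four congruent, one incongruent, and one invalid trial give a score of 3, while six congruent trials give the maximum score of 6.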

Statistical analysis

To test H1, we first performed Pearson correlations to assess the association between EMI when watching the original videos and when watching the AI-manipulated videos. We then conducted repeated-measures ANOVAs separately for the RunwayML and DeepFaceLab videos, with age and gender as covariates. Finally, Bayesian repeated-measures analyses were performed.
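
For reference, the Pearson correlation used in the first step of the H1 analysis can be written out as a short Python function. This is a minimal sketch of the coefficient only: it takes one original-video EMI and one AI-video EMI per participant (hypothetical inputs), returns r without a p-value, and does not cover the repeated-measures ANOVAs or the Bayesian analyses. In practice a library routine such as `scipy.stats.pearsonr`, which also returns a p-value, would typically be used.

```python
import math
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation between paired scores x and y."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must be paired and contain at least two values")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    dx = [a - mean_x for a in x]
    dy = [b - mean_y for b in y]
    cov = sum(a * b for a, b in zip(dx, dy))
    return cov / math.sqrt(sum(a * a for a in dx) * sum(b * b for b in dy))


if __name__ == "__main__":
    original_emi = [0.1, 0.2, 0.3, 0.4]  # toy values, not study data
    ai_emi = [0.1, 0.3, 0.2, 0.4]
    print(round(pearson_r(original_emi, ai_emi), 3))  # prints: 0.8
```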

Regarding H2, we aimed only to test whether children followed gaze in the expected way despite potential confounding factors (e.g., actors of different ethnicities and AI-manipulated videos). This was done using a one-sample t-test against the chance level of 0.
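
The one-sample t statistic for the GF difference scores against the chance level of 0 can be sketched as follows. It computes only t and its degrees of freedom (t = mean / (SD / √n), df = n − 1); obtaining the p-value requires the t distribution, for example via `scipy.stats.ttest_1samp(scores, 0)`. The function name and the toy scores are illustrative, not study data.

```python
import math
from statistics import mean, stdev
from typing import Sequence, Tuple


def one_sample_t(scores: Sequence[float], chance: float = 0.0) -> Tuple[float, int]:
    """Return (t, df) for a one-sample t-test of scores against a chance level."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two scores")
    sd = stdev(scores)  # sample SD (n - 1 denominator)
    if sd == 0:
        raise ValueError("t is undefined when all scores are identical")
    t = (mean(scores) - chance) / (sd / math.sqrt(n))
    return t, n - 1


if __name__ == "__main__":
    gf_scores = [1, 2, 3, 4, 5]  # toy difference scores in the -6..6 range
    t, df = one_sample_t(gf_scores)
    print(f"t({df}) = {t:.2f}")  # prints: t(4) = 4.24
```

A chance level of 0 follows from the difference score: an infant whose first looks go to either toy at random would, on average, produce as many incongruent as congruent trials.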