The European Union's Artificial Intelligence Act has been approved by EU lawmakers and will come into force in 2024. The law aims to protect citizens' health, safety and fundamental rights from potential harm caused by AI systems.

The Act creates a tiered risk classification system, with obligations that scale with the level of risk and tough penalties for non-compliance. For companies that want to use this law as a framework to improve their AI compliance strategy, it is essential to understand its key aspects and implement best practices.

Who does the EU AI Act apply to?

The EU AI Act applies to any AI system placed on the market, put into service or used within the EU. It therefore covers both AI providers (companies that sell AI systems) and AI deployers (organizations that use those systems).

The regulation applies to many types of AI systems, including machine learning, deep learning, and generative AI. Exceptions are made for AI systems used for military and national security purposes, open source AI systems (excluding large-scale generative AI systems or foundation models), and AI used for scientific research. Importantly, the law applies not only to new systems but also to applications that are already in use.

Key provisions of the EU AI Act

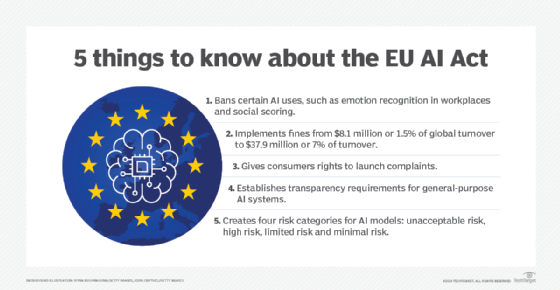

The AI Act adopts a risk-based approach to AI, categorizing potential risks into four levels: unacceptable, high, limited, and minimal. Compliance requirements are strictest for unacceptable and high-risk AI systems, so companies and organizations that want to comply with the Act should pay closest attention to these two categories.

Examples of unacceptable AI systems include social scoring and emotional manipulation AI. The creation of new AI systems that fall into this category is prohibited, and existing systems must be removed from the market within six months.

Examples of high-risk AI systems include those used in employment, education, essential services, critical infrastructure, law enforcement, and justice. These systems must be registered in a public database and their creators must certify that they do not pose significant risks. This process includes meeting requirements related to risk management, data governance, documentation, and human oversight, as well as meeting technical standards for security, accuracy, and robustness.

While these detailed requirements for high-risk AI systems are still being finalized, the EU expects to publish more specific guidance and compliance timelines in the coming months. Companies developing or using high-risk AI systems should stay updated on these developments and prepare for implementation as soon as the final requirements are published.

Similar to the EU data privacy regulation GDPR, the AI Act imposes tough penalties for violations. For the most serious violations, companies can be fined up to €35 million or 7% of their annual worldwide revenue, whichever is greater. The Act applies different, lower penalty tiers to other types of violation.
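The "whichever is greater" rule can be read as a simple maximum of two amounts. The sketch below is illustrative only and is not legal advice: the function name and parameters are hypothetical, the 7% share comes from the text above, and the fixed amount of €35 million is the top-tier figure in the adopted Act, treated here as an assumption. Lower tiers apply to other types of violation.

```python
def max_fine_eur(annual_worldwide_revenue_eur: float,
                 fixed_amount_eur: float = 35_000_000,  # assumed top-tier fixed amount
                 revenue_percent: float = 7) -> float:   # share of revenue, from the text
    """Upper bound of the fine: the larger of the fixed and revenue-based amounts."""
    if annual_worldwide_revenue_eur < 0:
        raise ValueError("revenue must be non-negative")
    revenue_based = annual_worldwide_revenue_eur * revenue_percent / 100
    return max(fixed_amount_eur, revenue_based)


# A company with EUR 100 million in revenue: the fixed amount is the larger one.
small_company_cap = max_fine_eur(100_000_000)

# A company with EUR 1 billion in revenue: 7% of revenue is the larger one.
large_company_cap = max_fine_eur(1_000_000_000)
```

Under these assumed figures, the revenue-based amount overtakes the fixed amount once annual worldwide revenue exceeds €500 million.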

Enterprise Roadmap: 10 Steps to Compliance

The EU AI Act is likely to be the first of many AI regulations to come. There is still time to comply, and companies can get a head start by following the steps below. This approach will help companies avoid fines, keep their AI products and services on the market, and serve customers in the EU and beyond with confidence.

1. Strengthen AI governance

As AI laws proliferate, AI governance is no longer a “nice to have” but a must for businesses. Use the EU AI Act as a framework to strengthen your existing governance practices.

2. Create an AI inventory

Catalog all existing and planned AI systems and identify your organization's role as an AI provider or AI deployer. Most end-user organizations are AI deployers, while AI vendors are typically AI providers. Assess the risk categories for each system and outline corresponding obligations.
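One way to start such an inventory is a small structured record per system. The following is a minimal sketch, not a prescribed format: the class and field names, the example systems, and the tiers assigned to them are all hypothetical, and the obligations are paraphrased from this article rather than from the legal text.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    PROVIDER = "provider"  # sells the AI system
    DEPLOYER = "deployer"  # uses the AI system


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Obligations paraphrased from this article; empty lists mean "not detailed here".
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["prohibited: do not build, withdraw existing systems"],
    RiskTier.HIGH: [
        "register in the public database",
        "certify that the system does not pose significant risks",
    ],
    RiskTier.LIMITED: [],
    RiskTier.MINIMAL: [],
}


@dataclass(frozen=True)
class AISystem:
    name: str
    role: Role
    tier: RiskTier
    planned: bool = False  # False means the system already exists


def priority_systems(inventory):
    """Names of systems in the two strictest tiers, strictest tier first."""
    order = [RiskTier.UNACCEPTABLE, RiskTier.HIGH]
    flagged = [s for s in inventory if s.tier in order]
    return [s.name for s in sorted(flagged, key=lambda s: order.index(s.tier))]


# Hypothetical entries; the tiers shown are illustrative assessments only.
inventory = [
    AISystem("resume-screener", Role.DEPLOYER, RiskTier.HIGH),
    AISystem("support-chatbot", Role.DEPLOYER, RiskTier.LIMITED),
    AISystem("admissions-ranker", Role.PROVIDER, RiskTier.HIGH, planned=True),
]

for system in inventory:
    print(system.name, system.role.value, system.tier.value, OBLIGATIONS[system.tier])
```

Keeping role and risk tier as explicit fields makes it straightforward to filter the inventory, for example with `priority_systems(inventory)`, when deciding where compliance work should begin.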

3. Update AI procurement practices

When selecting AI platforms and products, end-user organizations should ask potential vendors about their approach and roadmap to AI Act compliance. Revise procurement guidelines to include compliance requirements as required criteria in requests for information and requests for proposals.

4. Train cross-functional teams on AI compliance

Conduct awareness and training workshops for both technical and non-technical teams, including legal, risk, HR and operations. These workshops should address the obligations that the AI Act imposes across the entire AI lifecycle, including data quality, data provenance, bias monitoring, adverse effects and errors, documentation, human oversight, and post-deployment monitoring.

5. Establish an internal AI audit

In finance, the internal audit team is responsible for evaluating the organization's operations, internal controls, risk management, and governance. Similarly, for AI compliance, create an independent AI audit function to evaluate the organization's AI lifecycle practices.

6. Adapt risk management frameworks for AI

Extend and adapt your existing enterprise risk assessment and management framework to cover AI-specific risks. A comprehensive AI risk management framework will make it easier to comply with new regulations as they take effect, including the EU AI Act and other upcoming global, federal, state, and local regulations.

7. Implement data governance

Ensure proper governance of training data used in general-purpose models such as large language models and foundation models. Training data must be documented and must comply with data privacy laws such as GDPR, as well as EU copyright and data scraping rules.

8. Increase AI transparency

While AI and generative AI systems are often described as black box tools, regulators prefer transparent systems. To increase transparency and interpretability, explore and develop explainable AI techniques and tooling.

9. Incorporate AI into compliance workflows

Use AI tools to automate compliance tasks such as tracking the regulatory requirements and obligations that apply to your organization. Other applications of AI in compliance include monitoring internal processes, maintaining an inventory of AI systems, and generating metrics, documentation, and summaries.

10. Proactively engage with regulators

Some of the details regarding compliance with and implementation of the AI Act are still being finalized, and changes are likely over the coming months and years. If questions or grey areas remain, contact the European AI Office to clarify expectations and requirements.

Kashyap Kompella is an industry analyst, author, educator, and AI advisor to leading enterprises and startups across the US, Europe, and Asia Pacific. He currently serves as the CEO of RPA2AI Research, a global technology industry analyst firm.