Code embeddings are an innovative way to represent code snippets as dense vectors in a continuous space. These embeddings capture the semantic and functional relationships between code snippets, enabling powerful applications in AI-assisted programming. Similar to word embeddings in natural language processing (NLP), code embeddings place similar code snippets close together in a vector space, allowing machines to understand and manipulate the code more effectively.

What is code embedding?

Code embeddings convert complex code structures into numerical vectors that capture the meaning and functionality of the code. Unlike traditional methods that treat code as strings, embeddings capture the semantic relationships between pieces of code, which is critical for various AI-driven software engineering tasks such as code search, completion, and bug detection.

For example, consider the following two Python functions:

def add_numbers(a, b):

return a + b

def sum_two_values(x, y):

result = x + y

return result

Although these functions appear syntactically different, they perform the same operation. A good code embedding would represent these two functions with similar vectors, capturing the similarity of their functionality despite differences in the text.

Vector embedding

How are embed codes created?

There are various techniques for creating code embeddings. One common approach is to use neural networks to learn these representations from large code datasets. The network analyzes the code structure, including tokens (keywords, identifiers), syntax (the structure of the code), and sometimes comments, to learn the relationships between different code snippets.

Let's take a closer look at the process:

- Code as a sequenceFirst, a code snippet is treated as a sequence of tokens (variables, keywords, and operators).

- Neural Network TrainingA neural network processes these sequences and learns to map them to a fixed-size vector representation. The network takes into account factors such as syntax, semantics, and relationships between code elements.

- Capture the Similarities: The goal of this training is to place similar code snippets (with similar functionality) close to each other in vector space, which allows tasks like finding similar code and comparing functionality.

Here is a simplified Python example of how to preprocess your code for embedding:

import ast

def tokenize_code(code_string):

tree = ast.parse(code_string)

tokens = []

for node in ast.walk(tree):

if isinstance(node, ast.Name):

tokens.append(node.id)

elif isinstance(node, ast.Str):

tokens.append('STRING')

elif isinstance(node, ast.Num):

tokens.append('NUMBER')

# Add more node types as needed

return tokens

# Example usage

code = """

def greet(name):

print("Hello, " + name + "!")

"""

tokens = tokenize_code(code)

print(tokens)

# Output: ['def', 'greet', 'name', 'print', 'STRING', 'name', 'STRING']

This tokenized representation can then be fed into a neural network for embedding.

Existing approaches to code embedding

Existing code embedding methods can be divided into three main categories:

Token-based method

Token-based methods treat the code as a sequence of vocabulary tokens. Techniques such as Term Frequency-Inverse Document Frequency (TF-IDF) and deep learning models such as CodeBERT fall into this category.

Tree-based methods

Tree-based methods parse the code into an Abstract Syntax Tree (AST) or other tree structures to capture the syntactic and semantic rules of the code. Examples include tree-based neural networks and models such as code2vec and ASTNN.

Graph-based methods

Graph-based techniques build graphs from the code, such as control flow graphs (CFGs) or data flow graphs (DFGs), to represent the dynamic behavior and dependencies of the code. GraphCodeBERT is a notable example.

TransformCode: A Framework for Code Embedding

TransformCode: Unsupervised Learning of Code Embeddings

TransformCode is a framework that addresses the limitations of existing methods by learning code embeddings in a contrastive learning manner. It is encoder and language agnostic, allowing it to leverage any encoder model and process any programming language.

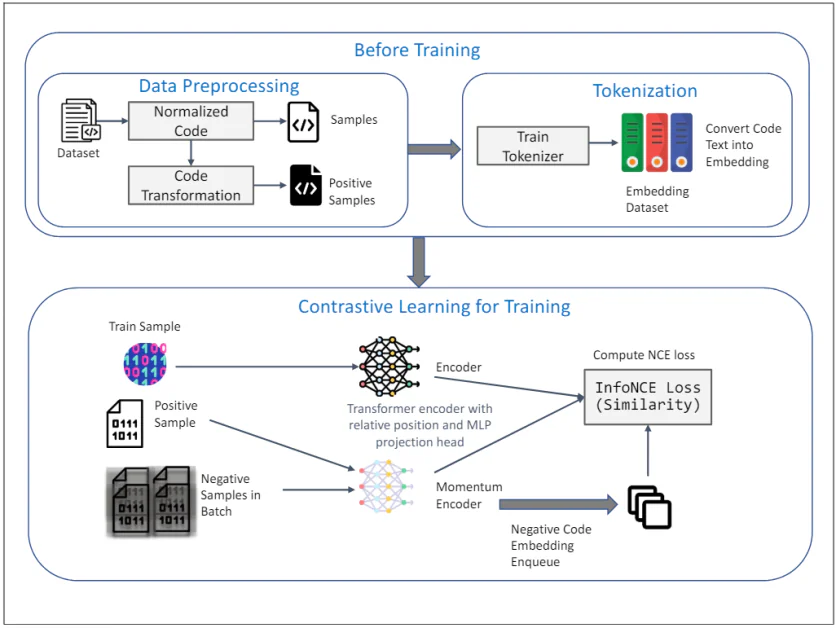

The diagram above shows the TransformCode framework for unsupervised learning of code embeddings using contrastive learning, which mainly consists of two phases. Before training and Contrastive learning for trainingA detailed description of each component follows:

Before training

1. Data preprocessing:

- data set: The initial input is a dataset containing code snippets.

- Normalized code: The code snippets are normalized, comments are removed, and variables are renamed to a standard form, which reduces the impact of variable naming on the training process and improves the generalizability of the model.

- Code conversion: The normalized codes are then transformed using various syntactic and semantic transformations to generate positive samples. These transformations ensure that the meaning of the codes is not altered and provide diverse and robust samples for contrastive learning.

2. Tokenization:

- Training the tokenizer: The tokenizer is trained on a code dataset and converts the code text into embeddings, which involves breaking down the code into smaller units, such as tokens, that can be processed by the model.

- Embedded Dataset: The trained tokenizer is then used to convert the entire code dataset into embeddings, which serve as the input for the contrastive learning phase.

Contrastive learning for training

3. Training process:

- Train sample: Samples from the training dataset are selected as query code representations.

- Positive samples: The corresponding positive samples are the transformed versions of the query codes obtained during the data preprocessing phase.

- Negative samples in a batch: Negative samples are all other code samples that are different from the positive samples in the current mini-batch.

4. Encoders and Momentum Encoders:

- Transformer Encoder with Relative Position and MLP Projection Head: Both the query and positive samples are input to a Transformer encoder, which incorporates relative positional encoding to capture the syntactic structure and relationships between tokens in the code. A Multi-Layer Perceptron (MLP) projection head is used to map the encoded representation into a low-dimensional space where a contrastive learning objective is applied.

- Momentum Encoder: A momentum encoder is also used and updated by a moving average of the parameters of the query encoder, which maintains consistency and diversity in the representation and prevents the collapse of the contrastive loss. Negative samples are encoded using this momentum encoder and queued for the contrastive learning process.

5. Contrasting learning objectives:

- Calculate the InfoNCE loss (similarity). The InfoNCE (Noise Contrastive Estimation) loss is computed to maximize the similarity between the query and positive samples and minimize the similarity between the query and negative samples. This objective ensures that the learned embeddings are discriminative and robust to capture the semantic similarity of code snippets.

The overall framework leverages the strengths of contrastive learning to learn meaningful and robust code embeddings from unlabeled data. The use of AST transformations and momentum encoders further improves the quality and efficiency of the learned representations, making TransformCode a powerful tool for a variety of software engineering tasks.

Key features of TransformCode

- Flexibility and adaptability: It can be extended to a variety of downstream tasks that require code representation.

- Efficiency and scalability: It does not require large models or extensive training data and supports any programming language.

- Unsupervised vs. supervised learning: It can be applied to both learning scenarios by incorporating task-specific labels or goals.

- Adjustable parameters: The number of encoder parameters can be adjusted based on the available computing resources.

TransformCode introduces a data augmentation technique called AST transformation, which applies syntactic and semantic transformations to original code snippets, generating diverse and robust samples for contrastive learning.

Application of code embedding

Code embeddings have revolutionized many aspects of software engineering by converting code from a textual format into a numerical representation that can be used by machine learning models. Some of the main uses include:

Code search improvements

Traditionally, code search has relied on keyword matching, which often produces irrelevant results. Code embeddings enable semantic search, where code snippets are ranked based on their functional similarity, even if they use different keywords. This greatly improves the accuracy and efficiency of finding relevant code within large codebases.

Smarter code completion

Code completion tools suggest relevant code snippets based on the context you're in. Leveraging code embeddings, these tools can understand the meaning of the code you're writing and provide more accurate and helpful suggestions, making your coding experience faster and more productive.

Automatic code fixes and bug detection

Code embeddings can be used to identify patterns that indicate bugs or inefficiencies in your code. By analyzing code snippets for similarity to known bug patterns, these systems can automatically suggest fixes or highlight areas that need further inspection.

Improved code summarization and documentation generation

Large codebases often lack proper documentation, making it difficult for new developers to understand how the code works. Code embedding allows you to create concise summaries that capture the essence of the code's functionality. This not only improves code maintainability but also facilitates knowledge transfer within development teams.

Improved code review

Code reviews are essential to maintaining code quality. Code embedding assists reviewers by highlighting potential issues and suggesting improvements. Additionally, it can facilitate comparison between different code versions, making the review process more efficient.

Cross-lingual code processing

The world of software development is not limited to a single programming language. Code embeddings show promise in facilitating cross-language code processing tasks. By capturing semantic relationships between code written in different languages, these techniques could enable tasks such as cross-programming language code search and analysis.